Legacy Plugins

Jenkins Artifactory Plug-in

We have recently released a next-generation plugin, the Jenkins JFrog Plugin. The new plugin can be installed and used side by side with the Jenkins Artifactory plugin.

Migrate to the Jenkins JFrog Plugin

If you're already using the Artifactory Plugin, we recommend also installing the JFrog Plugin and gradually migrating your jobs from the old plugin to the new one. Existing jobs can also use both plugins at the same time. The old plugin will continue to be supported; however, new functionality will most likely be added to the new plugin only.

Why Did We Create the Jenkins JFrog Plugin?

We want to ensure that the Jenkins plugin continues to receive the new functionality and improvements that are added very frequently to JFrog CLI. JFrog CLI already includes significantly more features than the Jenkins Artifactory Plugin. The new JFrog plugin will receive these updates automatically.

How is the Jenkins JFrog Plugin Different from the Jenkins Artifactory Plugin?

Unlike the Jenkins Artifactory plugin, the Jenkins JFrog plugin relies completely on JFrog CLI and serves as a wrapper for it. This means that the APIs you'll be using in your Pipeline jobs look very similar to JFrog CLI commands. The Jenkins JFrog plugin does not support UI-based Jenkins jobs, such as Free-Style jobs. It supports Pipeline jobs only.

How Do I Get Started?

Read the JFrog Plugin documentation to get started.

Plug-in Version 4.0.0 is available now

We have recently released a major version of the Jenkins Artifactory plugin - version 4.0.0.

This release includes a breaking change: builds that use Gradle versions below 6.8.1 are no longer supported.

The reason for this change is to support the Gradle Version Catalog feature.

The popular Jenkins Artifactory Plugin brings Artifactory's Build Integration support to Jenkins.

This integration allows your build jobs to deploy artifacts and resolve dependencies to and from Artifactory, and then have them linked to the build job that created them. The plugin includes a vast collection of features, including a rich pipeline API library and release management for Maven and Gradle builds with Staging and Promotion.

The plugin currently requires Jenkins version 2.159 or above.

Get started with configuring the Jenkins Artifactory Plug-in.

Learn More

Jenkins Cheat Sheet for Jenkins Pipelines and Continuous Integration

Watch the Screencast

Integration of the Jenkins Artifactory Plug-in with JFrog Pipelines

JFrog Pipelines integration with Jenkins is supported as of version 1.6 of JFrog Pipelines and version 3.7.0 of the Jenkins Artifactory Plugin. This integration allows triggering a Jenkins job from JFrog Pipelines. The Jenkins job is triggered using the native Jenkins step of JFrog Pipelines. When the Jenkins job finishes, it reports the status back to JFrog Pipelines.

The integration supports:

- Passing build parameters to the Jenkins job.

- Sending data from Jenkins back to JFrog Pipelines.

Set Up the Integration of Jenkins Artifactory Plug-in with Pipelines

-

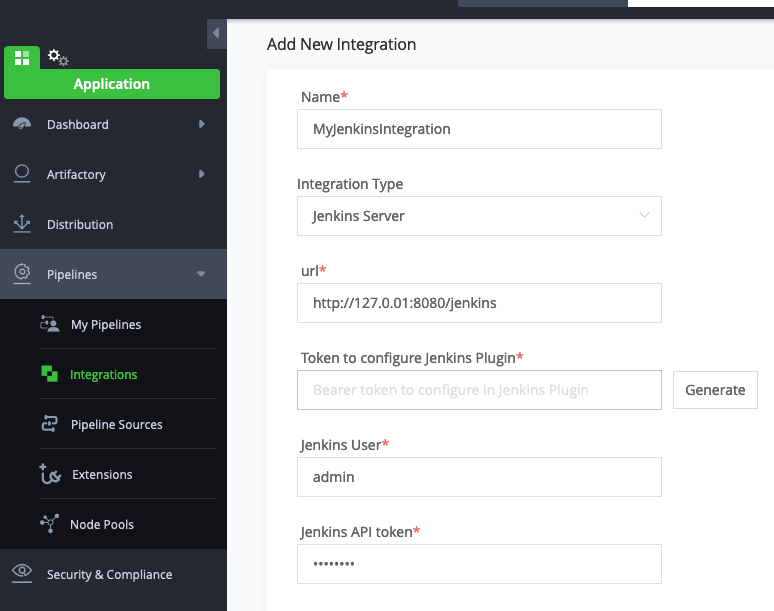

Open the JFrog Pipelines UI.

-

Under Integrations, click on the_Add an Integration_button.

-

Choose _Jenkins Server_integration type and fill out all of the required fields.

-

Click on the _Generate_button to generate a token. This token is used by Jenkins to authenticate with JFrog Pipelines.

-

Copy the Callback URL and Token, and save them in Jenkins | Manage | Configure System | JFrog Pipelines server

Trigger a Jenkins Job from JFrog Pipelines

To trigger a Jenkins job from JFrog Pipelines, add the Jenkins step to your pipelines YAML as shown here.

    - name: MyJenkinsStep
      type: Jenkins
      configuration:
        jenkinsJobName: <jenkins-job-name>
        integrations:
          - name: MyJenkinsIntegration

Once the Jenkins job finishes, it reports the status back to the Jenkins step.

More Options with the Jenkins Artifactory Plug-in

For Pipeline jobs in Jenkins, you also have the option of sending information from Jenkins back to JFrog Pipelines. This information is received by JFrog Pipelines as output resources of the Jenkins step. Here's how you add those resources to the Jenkins pipeline script:

    jfPipelines (
        outputResources: """[
            {
                "name": "pipelinesBuildInfo",
                "content": {
                    "buildName": "${env.JOB_NAME}",
                    "buildNumber": "${env.BUILD_NUMBER}"
                }
            }
        ]"""
    )

By default, the Jenkins job status and the optional output resources are sent by Jenkins to JFrog Pipelines when the job finishes. However, you can also send the status and output resources before the Jenkins job ends. Here's how you do it:

    jfPipelines (
        reportStatus: "SUCCESS"
    )

After Jenkins reports the status back to JFrog Pipelines, it does not wait for any response from JFrog Pipelines, and the job continues to the next step immediately.

You also have the option of putting the reportStatus and outputResources together as follows:

    jfPipelines (
        reportStatus: "SUCCESS",
        outputResources: """[
            {
                "name": "pipelinesBuildInfo",
                "content": {
                    "buildName": "${env.JOB_NAME}",
                    "buildNumber": "${env.BUILD_NUMBER}"
                }
            }
        ]"""
    )

The supported statuses are SUCCESS, UNSTABLE, FAILURE, NOT_BUILT or ABORTED.

The Jenkins job reports the status to JFrog Pipelines only once. If you don't report the status as part of the pipeline script as shown above, Jenkins reports it when the job finishes.

Multi-Configuration (Freestyle) Projects with the Jenkins Artifactory Plug-In

A multi-configuration project can be used to avoid duplicating many similar steps that would otherwise be made by different builds.

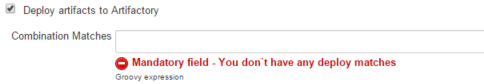

The plugin is used in the same way as in other Freestyle builds, but under "Deploy artifacts to Artifactory" you will find a mandatory *Combination Matches* field where you can enter the specific matrix combinations for which the plugin will deploy artifacts.

Combination Matches field

Here you can specify build combinations that you want to deploy through a Groovy expression that returns true or false.

When you specify a Groovy expression here, only the build combinations that result in true will be deployed to Artifactory. In evaluating the expression, multi-configuration axes are exposed as variables (with their values set to the current combination evaluated).

The Groovy expression uses the same syntax as the Combination Filter under Configuration Matrix.

For example, if you are building on different agents for different JDKs, you would specify the following:

| Description | Groovy expression |
|---|---|
| Deploy "if both linux and jdk7, it's invalid" | `!(label=="linux" && jdk=="jdk7")` |
| Deploy "if on master, just do jdk7" | `(label=="master").implies(jdk=="jdk7")` |
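To see which combinations such expressions select, here is a minimal Python sketch that enumerates a hypothetical two-axis matrix and applies Python equivalents of the two expressions in the table. It is an illustration only, not the plugin's code: the axis values and the function names are assumptions, and in Jenkins the expression itself is written in Groovy.

```python
from itertools import product

# Hypothetical matrix axes; real axis names and values come from the job configuration.
AXES = {
    "label": ["linux", "master"],
    "jdk": ["jdk7", "jdk8"],
}


def implies(a: bool, b: bool) -> bool:
    """Logical implication, the same truth table as Groovy's implies()."""
    return (not a) or b


def not_linux_jdk7(label: str, jdk: str) -> bool:
    # Python equivalent of: !(label=="linux" && jdk=="jdk7")
    return not (label == "linux" and jdk == "jdk7")


def master_only_jdk7(label: str, jdk: str) -> bool:
    # Python equivalent of: (label=="master").implies(jdk=="jdk7")
    return implies(label == "master", jdk == "jdk7")


def matching_combinations(predicate):
    """Return the axis combinations for which the predicate is true."""
    names = list(AXES)
    selected = []
    for values in product(*(AXES[name] for name in names)):
        combo = dict(zip(names, values))
        if predicate(**combo):
            selected.append(combo)
    return selected
```

Calling `matching_combinations(not_linux_jdk7)` returns every combination except linux with jdk7, which mirrors the rule that only combinations evaluating to true are deployed.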

Important Note

Deployment of the same Maven artifacts by more than one matrix job is not supported!

Trigger Builds with the Jenkins Artifactory Plug-in

The Artifactory Trigger allows a Jenkins job to be automatically triggered when files are added or modified in a specific Artifactory path. The trigger periodically polls Artifactory to check if the job should be triggered.

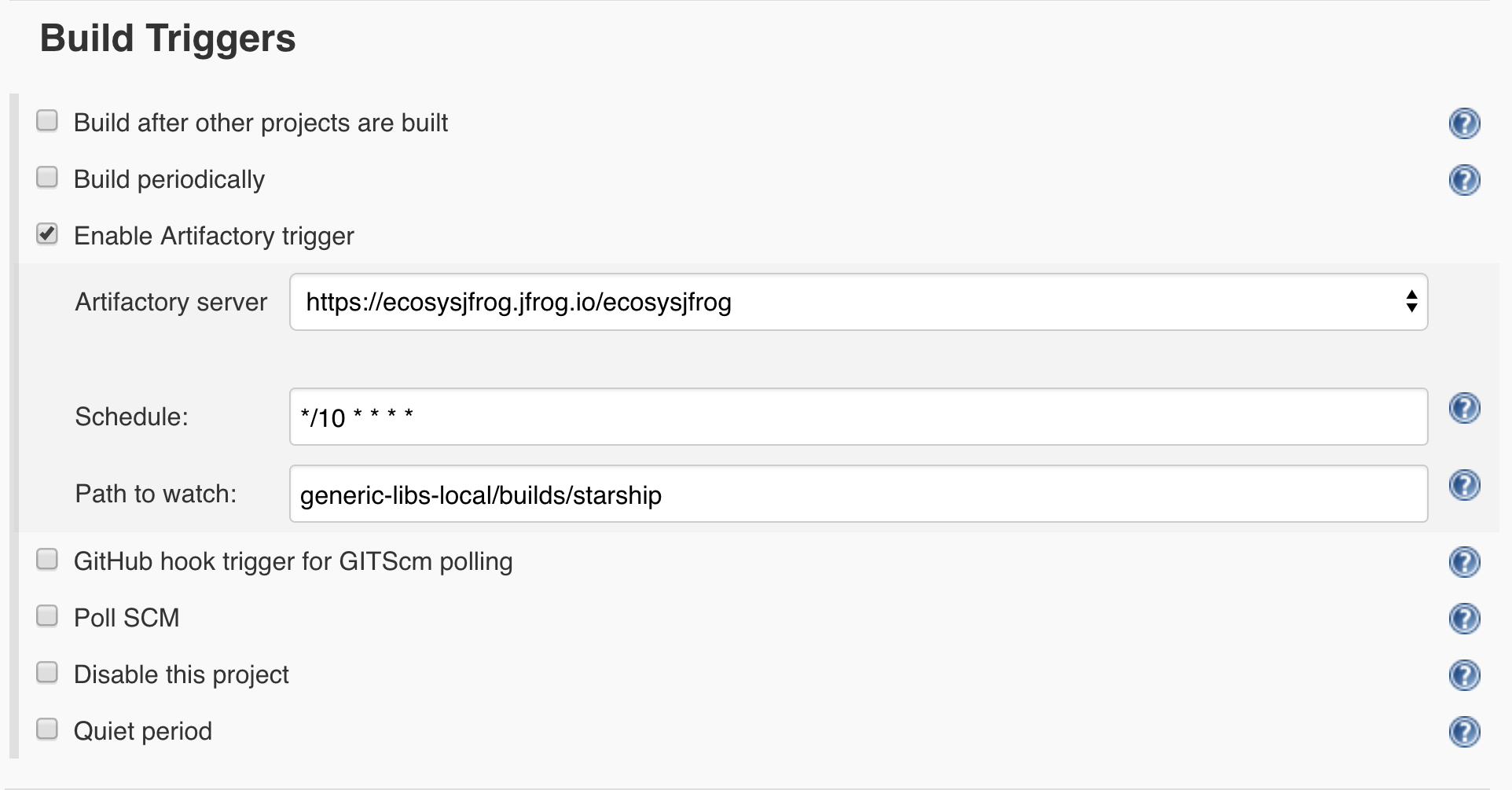

To enable the Artifactory trigger, follow these steps:

- In the Jenkins job UI, go to Build Triggers, and check the Enable Artifactory trigger checkbox.

- Select an Artifactory server.

- Define a cron expression in the Schedule field. For example, to poll Artifactory every ten minutes, set `*/10 * * * *`.

- Set a Path to watch. For example, when setting generic-libs-local/builds/starship, Jenkins polls the /builds/starship folder under the generic-libs-local repository in Artifactory for new or modified files.
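The following Python sketch illustrates the idea behind the watched path and the polling check. It is not the plugin's implementation: the function names are hypothetical, and the rule "trigger when any file is newer than the previous poll" is an assumption based on the description above.

```python
from datetime import datetime
from typing import Iterable, Tuple


def split_watch_path(path: str) -> Tuple[str, str]:
    """Split 'repo/folder/...' into the repository key and the folder inside it."""
    repo, _, folder = path.strip("/").partition("/")
    return repo, "/" + folder


def should_trigger(last_poll: datetime, modified_times: Iterable[datetime]) -> bool:
    """Assumed rule: trigger if any watched file changed after the previous poll."""
    return any(modified > last_poll for modified in modified_times)
```

For the path used in the example above, `split_watch_path("generic-libs-local/builds/starship")` separates the repository `generic-libs-local` from the folder `/builds/starship`.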

JIRA Integration and the Jenkins Artifactory Plug-in

Note

JIRA Integration is supported only in Free-Style and Maven jobs.

Pipeline jobs support a more generic integration, which allows integrating with any issue tracking system. See the Collecting Build Issues section in Declarative and Scripted Pipeline APIs documentation pages.

The Jenkins plugin may be used in conjunction with the Jenkins JIRA plugin to record the build's affected issues, and include those issues in the Build Info descriptor inside Artifactory and as searchable properties on deployed artifacts.

The SCM commit messages must include the JIRA issue ID. For example: HAP-007 - Shaken, not stirred
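As a rough illustration of why the issue ID has to appear in the commit message, the Python sketch below pulls JIRA-style keys out of a message. The regular expression and the function name are assumptions for illustration; the actual matching is done by the Jenkins JIRA plugin and may use a different, configurable pattern.

```python
import re

# Illustrative pattern for keys such as HAP-007; an assumption, not the plugin's matcher.
ISSUE_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def extract_issue_keys(message: str) -> list:
    """Return the distinct issue keys found in a commit message, in order."""
    keys = []
    for key in ISSUE_KEY.findall(message):
        if key not in keys:
            keys.append(key)
    return keys
```

A message without a recognizable key yields an empty list, in which case there is nothing to record for that commit.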

To activate the JIRA integration, make sure that Jenkins is set with a valid JIRA site configuration and select Enable JIRA Integration on the job configuration page:

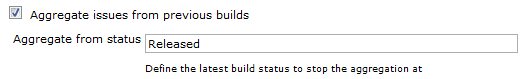

Aggregate Issues from Previous Builds

It is possible to collect, under a build deployed to Artifactory, all JIRA issues affected by this build as well as by previous builds. This allows you, for example, to see all issues between the previous release and the current build and, if the build is a new release build, to see all issues addressed in the new release.

To accumulate JIRA issues across builds, check the "Aggregate issues from previous builds" option and configure the last build status the aggregation should begin from. The default last status is "Released" (case insensitive), which means aggregation will begin from the first build after the last "Released" one.

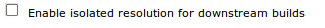
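The aggregation rule described above can be sketched in Python as follows. This is a simplified model under stated assumptions, not the plugin's code: the build records, their field names, and the function name are hypothetical.

```python
def aggregate_issues(previous_builds, current_issues, last_status="Released"):
    """Collect issues from the current build and from earlier builds.

    previous_builds: list of dicts ordered oldest to newest, each with a
    'status' (str or None) and an 'issues' list. Aggregation starts from the
    first build after the last one whose status matches last_status,
    compared case-insensitively.
    """
    start = 0
    for index, build in enumerate(previous_builds):
        if (build.get("status") or "").lower() == last_status.lower():
            start = index + 1  # begin right after the most recent match

    aggregated = []
    issue_lists = [b["issues"] for b in previous_builds[start:]] + [current_issues]
    for issues in issue_lists:
        for issue in issues:
            if issue not in aggregated:
                aggregated.append(issue)
    return aggregated
```

If no earlier build has the configured status, the sketch aggregates over all previous builds.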

Build Isolation and the Jenkins Artifactory Plugin

Note

Build Isolation is currently supported only in Free-Style and Maven jobs.

When executing the same chain of integration (snapshot) builds in parallel, a situation may arise in which downstream builds resolve snapshot dependencies which are not the original dependencies existing when the build was triggered.

This can happen when a root upstream build has run and triggered downstream builds that depend on its produced artifacts. If the upstream build then runs again before the running downstream builds have finished, those builds may resolve newly created upstream artifacts that are not meant for them, leading to conflicts.

Solution

The Jenkins plugin offers a checkbox for its Maven/Gradle builds, 'Enable isolated resolution for downstream builds', which plants a 'build.root' property that is added to the resolution URL.

This property is then read by the direct children of this build and implanted in their own resolution URLs, guaranteeing that the parent artifact resolved is the one that was built prior to the build being run.

- Maven: For Maven to use this feature, check the checkbox for the root build only, and make sure that all artifacts are resolved from Artifactory by using the 'Resolve artifacts from Artifactory' feature. This forces Maven builds to use the resolution URL, with the 'build.root' property added as a matrix param.

- Gradle: Once the 'Enable isolated resolution for downstream builds' checkbox has been checked, the build.root property is added to all existing resolvers.

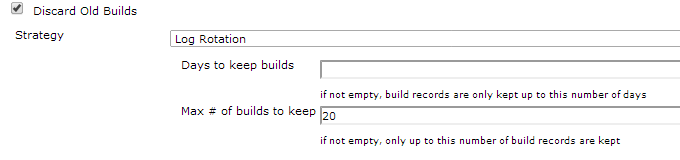
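To make the matrix param idea concrete, the Python sketch below appends a `build.root` parameter to a resolution URL using the generic `;key=value` matrix parameter syntax. The host name, the property value, and both function names are hypothetical, and the exact value the plugin plants is not specified here, so treat this purely as an illustration.

```python
from urllib.parse import quote


def add_matrix_param(resolution_url: str, key: str, value: str) -> str:
    """Append ';key=value' (matrix parameter syntax) to a repository URL."""
    return f"{resolution_url.rstrip('/')};{key}={quote(value, safe='')}"


def child_resolution_url(resolution_url: str, parent_build_root: str) -> str:
    # A direct child reuses the value planted by the root build (assumed behavior).
    return add_matrix_param(resolution_url, "build.root", parent_build_root)
```

Because every downstream build carries the same `build.root` value as its root build, resolution can be tied to the artifacts produced by that specific upstream run.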

Discard Old Builds

Note

To use this functionality in pipeline jobs, please refer to one of the Triggering Build Retention sections in the Artifactory Pipeline APIs documentation page.

The Jenkins project configuration lets you specify a policy for handling old builds.

You can delete old builds based on age or number as follows:

| Field | Description |
|---|---|
| Days to keep builds | The number of days that a build should be kept before it is deleted |
| Max # of builds to keep | The maximum number of builds that should be kept. When a new build is created, the oldest one will be deleted |

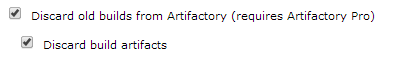
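The two retention settings can be modeled with a short Python sketch. This is a simplified illustration, not Jenkins or Artifactory code: the function name and the tuple layout are hypothetical, and it assumes a build is discarded when either limit is exceeded.

```python
from datetime import datetime, timedelta


def builds_to_discard(builds, now, days_to_keep=None, max_builds=None):
    """Return the build numbers to delete.

    builds: list of (build_number, started) tuples, where started is a datetime.
    """
    newest_first = sorted(builds, key=lambda b: b[1], reverse=True)
    discard = set()
    if days_to_keep is not None:
        cutoff = now - timedelta(days=days_to_keep)
        discard.update(number for number, started in newest_first if started < cutoff)
    if max_builds is not None:
        # Everything beyond the newest max_builds entries is discarded.
        discard.update(number for number, _ in newest_first[max_builds:])
    return sorted(discard)
```

Leaving either setting as `None` disables that limit in the sketch.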

Once these parameters are defined, in the Post-build Actions section, you can specify that Artifactory should also discard old builds according to these settings as follows:

| Field | Description |
|---|---|
| Discard old builds from Artifactory | Configures Artifactory to discard old builds according to the Jenkins project settings above |
| Discard build artifacts | Configures Artifactory to also discard the artifacts within the build |

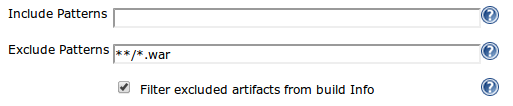

Excluded Artifacts and the BuildInfo

By default, when you provide exclude patterns for artifacts, the excluded artifacts are not deployed to Artifactory but are still included in the final BuildInfo JSON.

When you select "Filter excluded artifacts from build Info", the excluded artifacts appear in a separate section of the BuildInfo, which gives a clear picture of the entire build.

This is also crucial for promotion: the promotion procedure scans your BuildInfo JSON and tries to promote all the artifacts listed there, so it fails when artifacts were excluded unless this option is selected.

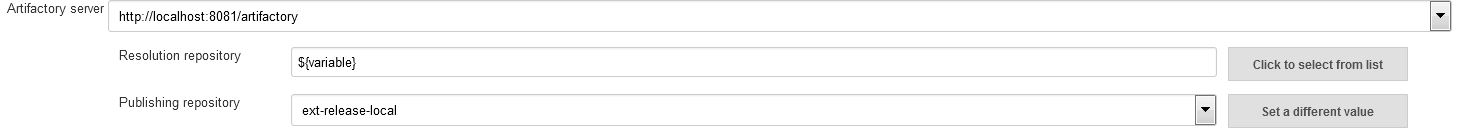
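The effect of the option can be sketched in Python as a split of the artifact list into two sections. This is an illustration under assumptions: the function name is hypothetical, shell-style wildcards from `fnmatch` stand in for the plugin's exclude patterns, and the real BuildInfo layout is not reproduced.

```python
from fnmatch import fnmatch


def split_artifacts(artifacts, exclude_patterns, filter_excluded):
    """Return (listed_artifacts, excluded_section) for a build-info-like record."""

    def is_excluded(name):
        return any(fnmatch(name, pattern) for pattern in exclude_patterns)

    if not filter_excluded:
        # Default: excluded artifacts are not deployed but are still listed with the rest.
        return list(artifacts), []

    listed = [a for a in artifacts if not is_excluded(a)]
    excluded = [a for a in artifacts if is_excluded(a)]
    return listed, excluded
```

With the option enabled, a promotion that walks only the first list would no longer encounter artifacts that were never deployed.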

Configure Repositories with Variables

This section is relevant for Free-Style and Maven jobs only.

You can select text mode in which you can type out your target repository.

In your target repository name, you can use variables that will be dynamically replaced with a value at build time.

The variables should be specified with a dollar-sign prefix and be enclosed by curly brackets.

For example: ${deployRepository}, ${resolveSnapshotRepository}, ${repoPrefix}-${repoName} etc.

The variables are replaced by values from one of the following job environments:

- Predefined Jenkins environment variables.

- Jenkins properties (Read more in Jenkins Wiki)

- Parameters configured in the Jenkins configuration under the "This build is parameterized" section - these parameters could be replaced by a value from the UI or using the Jenkins REST API.

- Injected variables via one of the Jenkins plugins ("EnvInject" for example).
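The substitution described above can be sketched in Python as follows. It is a minimal illustration, not the plugin's implementation: the function name is hypothetical, and leaving unknown variables untouched is an assumption.

```python
import re

# Matches variables written with a dollar-sign prefix and curly brackets, e.g. ${repoName}.
VARIABLE = re.compile(r"\$\{([^}]+)\}")


def resolve_repository(name: str, variables: dict) -> str:
    """Replace each ${key} in a repository name with its value from variables."""

    def replace(match):
        key = match.group(1)
        # Assumption: a variable with no known value is left as written.
        return variables.get(key, match.group(0))

    return VARIABLE.sub(replace, name)
```

For example, with `repoPrefix` set to `libs` and `repoName` set to `release`, the name `${repoPrefix}-${repoName}` resolves to `libs-release`.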

Dynamically Disable Deployment of Artifacts and Build-info

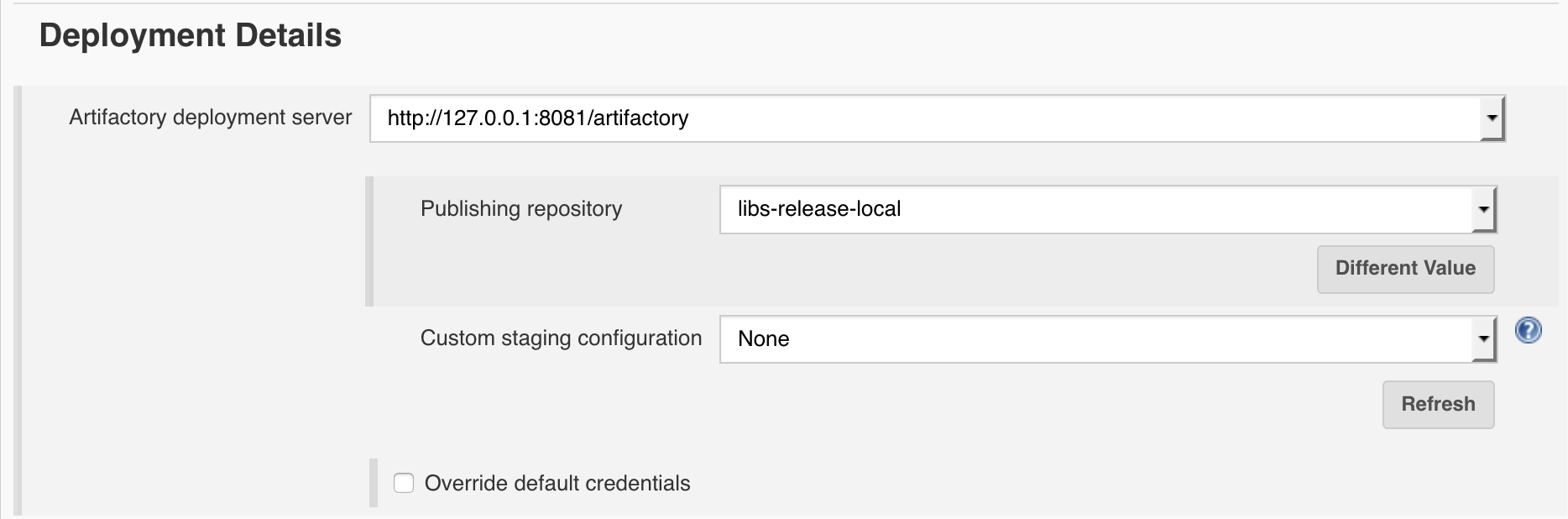

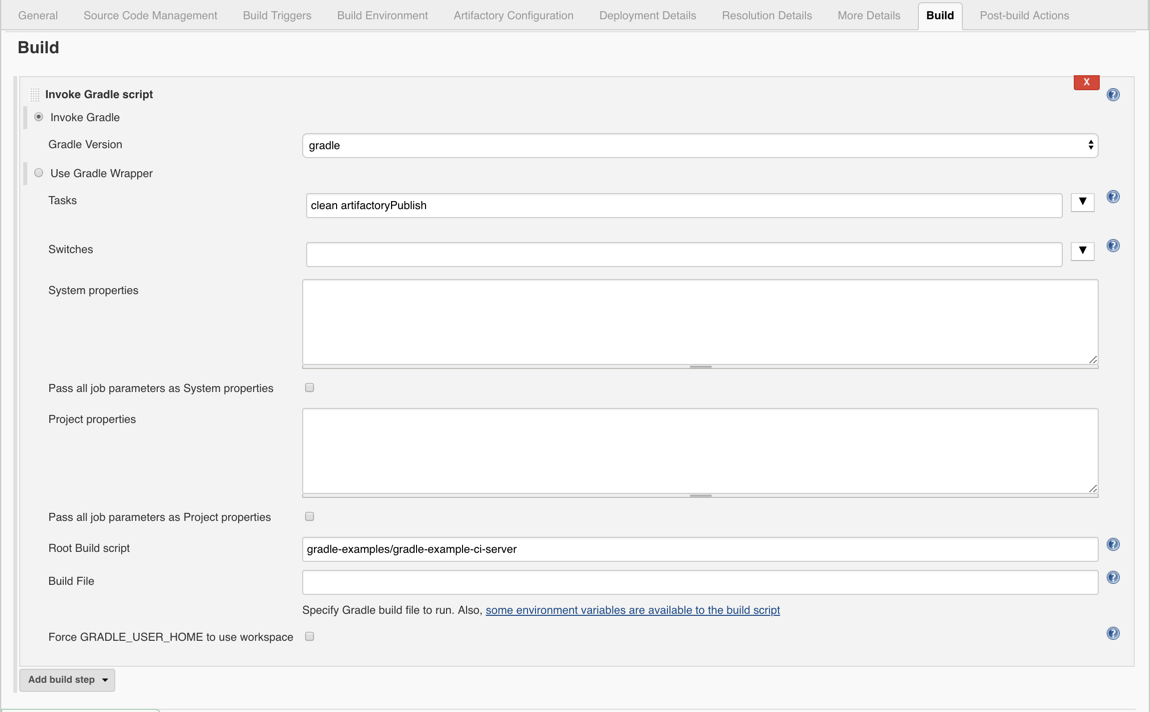

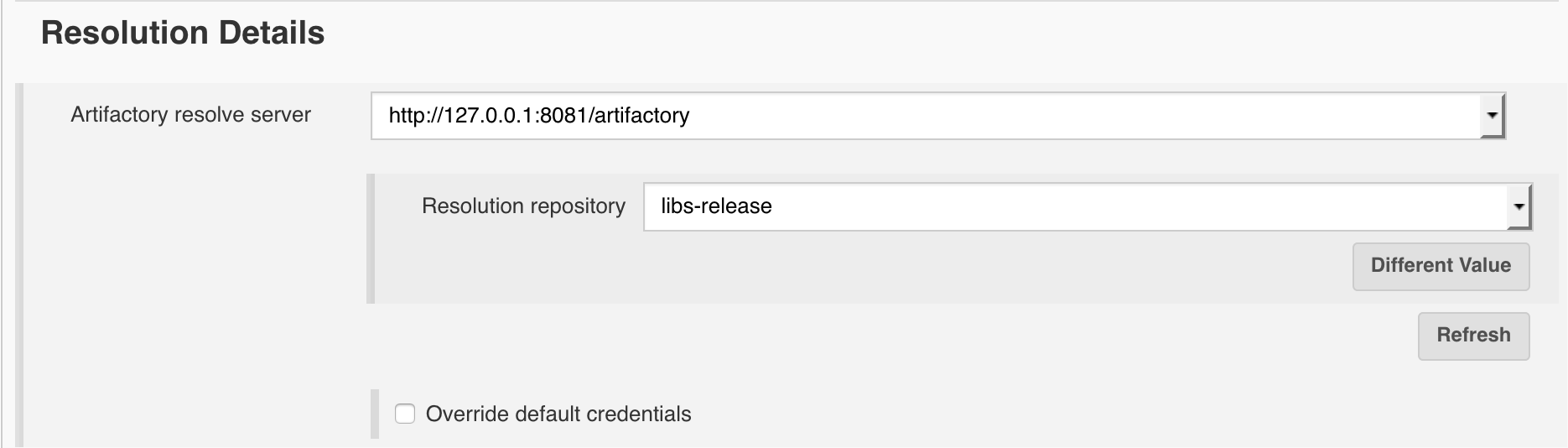

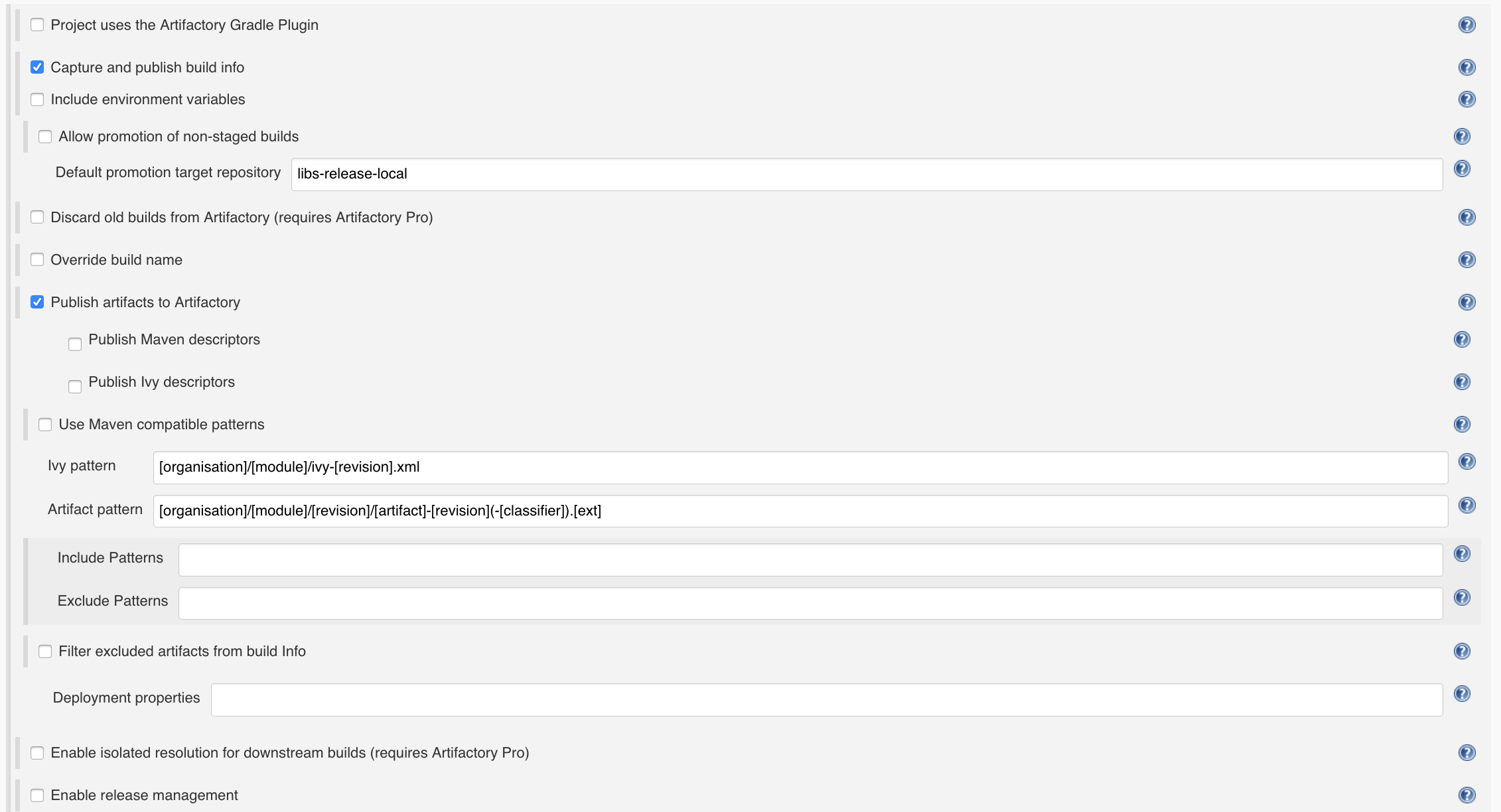

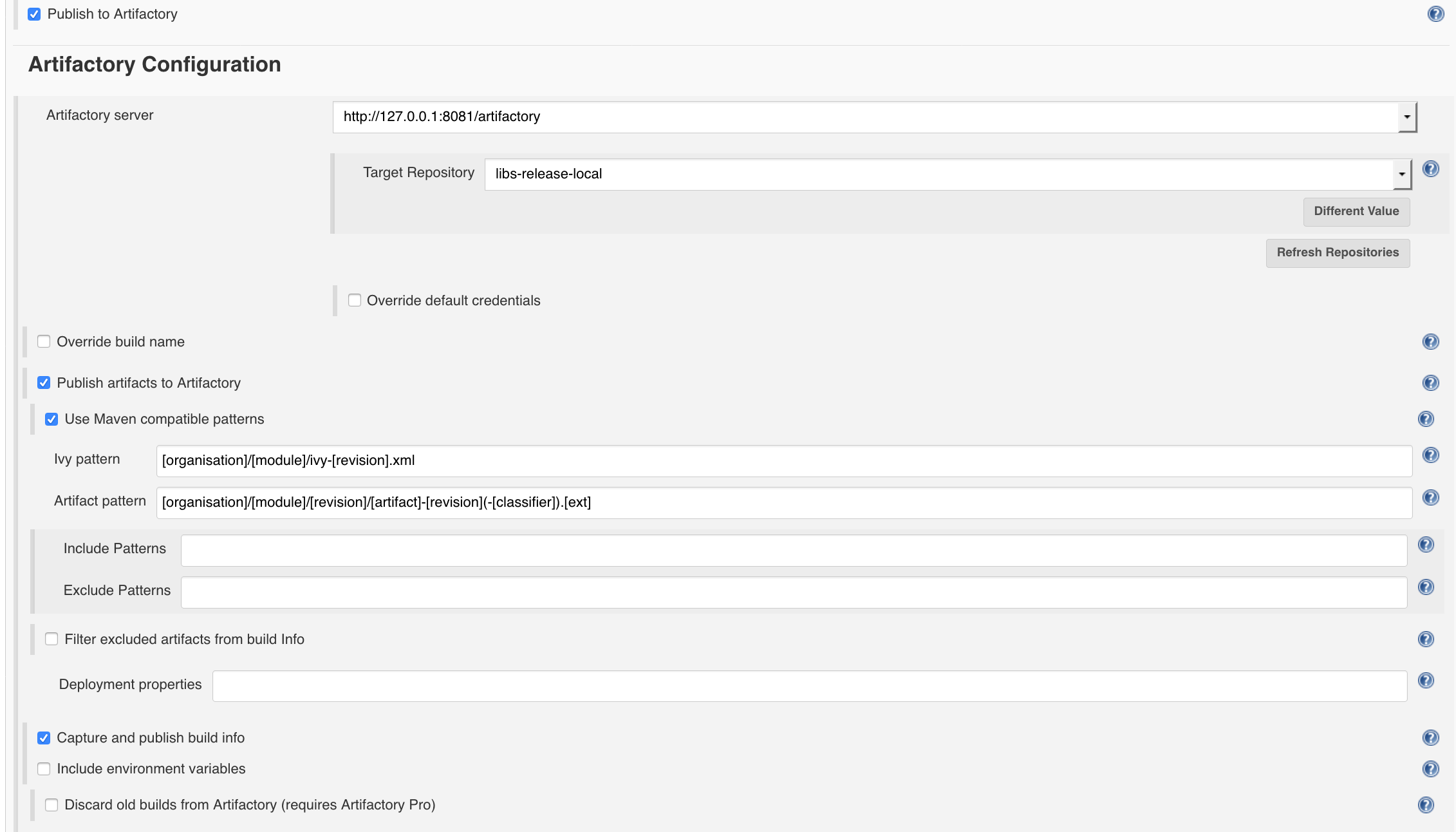

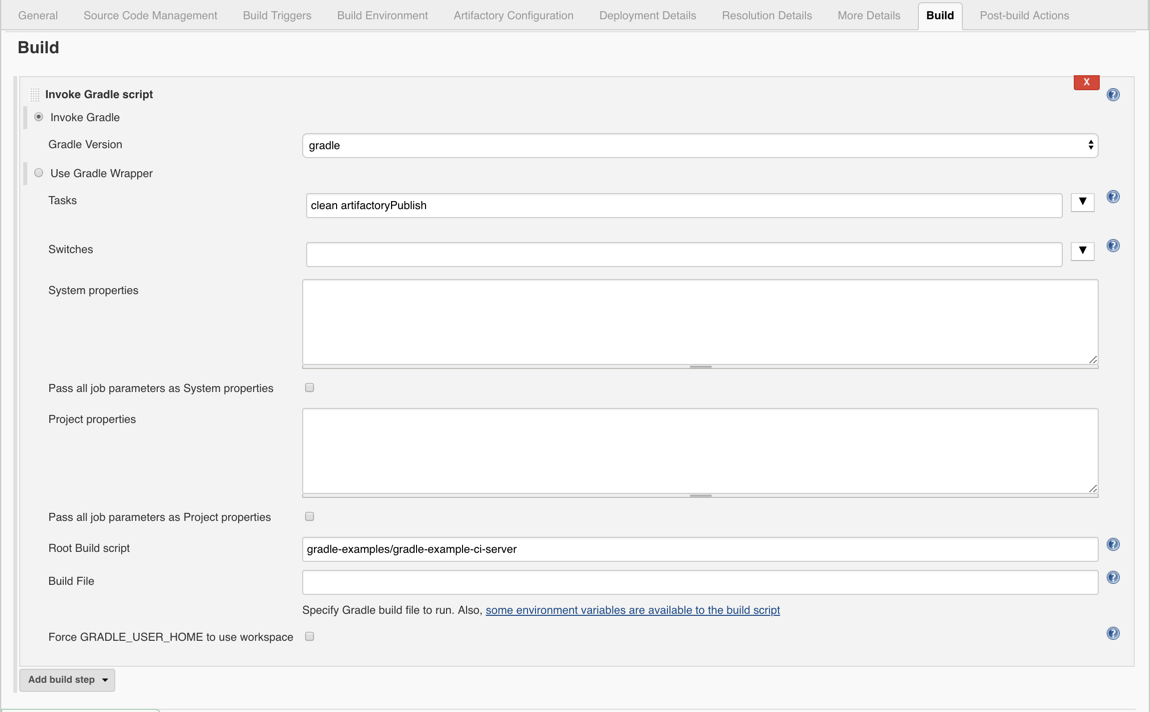

Maven, Gradle and Ivy builds can be configured to deploy artifacts and/or build information to Artifactory. For example, in the case of Gradle builds, you would set the Publishing repository field and check Capture and publish build-info. Maven and Ivy have similar (although slightly different) configuration parameters. By setting these parameters, you can configure each build tool respectively to deploy build artifacts and/or build information to Artifactory. However, there may be specific cases in which you want to override this setting and disable deploying artifacts and build information. In these cases, you can pass the following two system properties to any of the build tools:

- artifactory.publish.artifacts

- artifactory.publish.buildInfo

For example, for a Maven build, you can add these system properties to the Goals and options field as follows:

    clean install -Dartifactory.publish.artifacts=false -Dartifactory.publish.buildInfo=false

To control these properties dynamically, you can replace the values with Jenkins variables or environment variables that you define, as follows:

    clean install -Dartifactory.publish.artifacts=$PUBLISH_ARTIFACTS -Dartifactory.publish.buildInfo=$PUBLISH_BUILDINFO

Use the Jenkins Job DSL Plugin

The Jenkins Job DSL plugin allows the programmatic creation of jobs using a DSL. Using the Jenkins Job DSL plugin, you can create Jenkins jobs to run Artifactory operations. To learn about the Jenkins Job DSL, see the Job DSL Tutorial.

To view Seed job examples and instructions for each type of Jenkins jobs, see jenkins-job-dsl-examples.

Release Notes for the Jenkins Artifactory Plug-in

Note

The release notes for versions 3.18.1 and above are available here.

4.0.0

Breaking Change - Builds that utilize Gradle version below 6.8.1 are no longer supported.

The reason for this change is to support the Gradle Version Catalog feature.

3.18.0 (29 December 2022)

- Support excluding build info module properties https://github.com/jfrog/jenkins-artifactory-plugin/pull/720

- Add support for virtual and federated repositories in UI jobs. https://github.com/jfrog/jenkins-artifactory-plugin/pull/726

- Filter suspected secrets from env vars values https://github.com/jfrog/jenkins-artifactory-plugin/pull/732

- Gradle - Publish bom files when ‘java-platform’ plugin applied https://github.com/jfrog/build-info/pull/680

- Maven - Remove the dummy remote repository. https://github.com/jfrog/build-info/pull/683

3.17.4 (1 December 2022)

- Bug fix - ConcurrentModificationException in UnifiedPromoteBuildAction https://github.com/jfrog/jenkins-artifactory-plugin/pull/710

- Make the Ivy plugin optional https://github.com/jfrog/jenkins-artifactory-plugin/pull/714

3.17.3 (10 November 2022)

- Support Maven opts from jvm.config file https://github.com/jfrog/jenkins-artifactory-plugin/pull/708

- Bug fix - Remove custom exception for unknown violation types https://github.com/jfrog/build-info/pull/675

- Bug fix - Sha256 being set to literal "SHA-256" in build-info json https://github.com/jfrog/build-info/pull/669

3.17.2 (19 October 2022)

- Bug fix - Pom doesn't get deployed using Maven Install plugin 3+ https://github.com/jfrog/build-info/pull/670

3.17.1 (28 September 2022)

- Bug fix - Add CopyOnWriteArrayList to SetModules in buildInfoDeployer https://github.com/jfrog/jenkins-artifactory-plugin/pull/696

- Bug fix - Remove Cause.UserIdCause https://github.com/jfrog/jenkins-artifactory-plugin/pull/699

- Bug fix - Support Docker module ID with slash https://github.com/jfrog/build-info/pull/666

3.17.0 (21 July 2022)

- Support JFrog Projects in Gradle/Generic jobs - https://github.com/jfrog/jenkins-artifactory-plugin/pull/687

3.16.3 (4 July 2022)

- Bugfix - Broken AQL request when build is not defined in FilesGroup - https://github.com/jfrog/build-info/pull/650

- Bugfix - Usage report fails with access tokens - https://github.com/jfrog/jenkins-artifactory-plugin/pull/669

- Bugfix - Create workspace directories if missing - https://github.com/jfrog/jenkins-artifactory-plugin/pull/670

3.16.2 (24 April 2022)

- Bug fix - Calling MavenDescriptor.transform() results in java.lang.StackOverflowError - https://github.com/jfrog/jenkins-artifactory-plugin/pull/656

- Bug fix - NoSuchMethodError in Maven builds - https://github.com/jfrog/build-info/pull/633

- Bug fix - Fails to fetch Promotion Plugins - https://github.com/jfrog/build-info/pull/635

- Bug fix - When project provided, "projectKey" query parameter should be added to build info URL - https://github.com/jfrog/build-info/pull/631

- Bug fix - Incorrect Docker manifest path when collecting build-info - https://github.com/jfrog/build-info/pull/643

- Bug fix - Warning about missing Git branch should be in debug level - https://github.com/jfrog/build-info/pull/632

3.16.1 (20 March 2022)

- Bug fix - credentials not loaded in global configuration - https://github.com/jfrog/jenkins-artifactory-plugin/pull/650

3.16.0 (16 March 2022)

- Maven - Support projects in UI jobs - https://github.com/jfrog/jenkins-artifactory-plugin/pull/633

- Maven - Deploy only in mvn install/deploy phases - https://github.com/jfrog/build-info/pull/626 & https://github.com/jfrog/build-info/pull/630

- Gradle - Support Gradle 8 - https://github.com/jfrog/jenkins-artifactory-plugin/pull/631

- Go - Filter out unused dependencies from build info - https://github.com/jfrog/build-info/pull/622

- Add Jenkins scoped credentials to credentials list - https://github.com/jfrog/jenkins-artifactory-plugin/pull/637

- Add information to the exception thrown when a pipeline job fails - https://github.com/jfrog/jenkins-artifactory-plugin/pull/626

- Bug fix - Stay on step context Node when finding root workspace - https://github.com/jfrog/jenkins-artifactory-plugin/pull/616

- Bug fix - In some cases, the project parameter in Maven is ignored - https://github.com/jfrog/build-info/pull/618

- Bug fix - Distribution declarative steps should be unblocking - https://github.com/jfrog/jenkins-artifactory-plugin/pull/640

- Bug fix - In some cases, the build-info URL is wrong - https://github.com/jfrog/build-info/pull/618 & https://github.com/jfrog/build-info/pull/619

- Bug fix - Failed to save System configuration - https://github.com/jfrog/jenkins-artifactory-plugin/pull/642

- Bug fix - in some cases, Docker module name in build-info is wrong - https://github.com/jfrog/build-info/pull/617

3.15.4 (6 February 2022)

- Bug fix - NoClassDefFoundError when running rtDockerPush - https://github.com/jfrog/jenkins-artifactory-plugin/pull/629

- Bug fix - Can't promote builds with projects - https://github.com/jfrog/jenkins-artifactory-plugin/pull/628

3.15.3 (26 January 2022)

- Bug fix - Signature of AmazonCorrettoCryptoProvider couldn't be found - https://github.com/jfrog/build-info/pull/609

3.15.2 (20 January 2022)

- Add SHA2 to upload files - https://github.com/jfrog/jenkins-artifactory-plugin/pull/615

- Bug fix - Add 'localpath' to artifact - https://github.com/jfrog/build-info/pull/606

3.15.1 (6 January 2022)

- Calculate SHA2 in builds - https://github.com/jfrog/build-info/pull/598

- Bug fix - Fix possible NullPointerException in getDeployableArtifactPropertiesMap - https://github.com/jfrog/jenkins-artifactory-plugin/pull/609

- Bug fix - Remove JGit dependency - https://github.com/jfrog/build-info/pull/600

3.15.0 (3 January 2022)

- Add support to VCS branch and VCS message - https://github.com/jfrog/jenkins-artifactory-plugin/pull/603

- Add VCS properties on late deploy artifacts - https://github.com/jfrog/jenkins-artifactory-plugin/pull/604

- Bug fix - HasSnapshot always returns true - https://github.com/jfrog/jenkins-artifactory-plugin/pull/606

3.14.2 (7 December 2021)

- Exclude common-lang2.4 dependency - https://github.com/jfrog/jenkins-artifactory-plugin/pull/591

3.14.1 (6 December 2021)

- Maven builds now also deploy to Artifactory using the ‘deploy’ phase - https://github.com/jfrog/build-info/commit/e9341d8f85ce3959fe90a3c143b6a40ed843074e

- Add gradle-enterprise-maven-extension-1.11.1.jar to mvn classpath - https://github.com/jfrog/jenkins-artifactory-plugin/pull/581

- Bug fix - Build-info ignores duplicate artifacts checksum - https://github.com/jfrog/jenkins-artifactory-plugin/pull/571

- Upgrade common-lang2.4 to common-lang3 - https://github.com/jfrog/jenkins-artifactory-plugin/pull/567

3.13.2 (23 September 2021)

- Bug fix - Xray Scan generates an exception - https://github.com/jfrog/build-info/pull/554

3.13.1 (2 September 2021)

- Bug fix - NPE in Gradle jobs when deployer is empty - https://github.com/jfrog/jenkins-artifactory-plugin/pull/554

- Bug fix - NPE in Gradle when build info was not generated - https://github.com/jfrog/jenkins-artifactory-plugin/pull/552

- Bug fix - NPE in extractVcs when running on the master - https://github.com/jfrog/jenkins-artifactory-plugin/pull/549

3.13.0 (17 August 2021)

- Show deployed Gradle artifacts - https://github.com/jfrog/jenkins-artifactory-plugin/pull/538

- Improved build scan table - https://github.com/jfrog/build-info/pull/545

- Bug fix - Autofill JFrog servers get triggered for the wrong server-id - https://github.com/jfrog/jenkins-artifactory-plugin/pull/534

- Bug fix - Go jobs don't run on Docker images when they should - https://github.com/jfrog/jenkins-artifactory-plugin/pull/544

- Bug fix - Go jobs don't collect environment variables when they should - https://github.com/jfrog/jenkins-artifactory-plugin/pull/544

3.12.5 (20 July 2021)

- Bug fix - Exception raised when workflow - multibranch plugin is not installed - https://github.com/jfrog/build-info/pull/530

- Bug fix - Fail to deserialize deployable artifacts - https://github.com/jfrog/build-info/pull/537

- Gradle - Skip uploading JAR if no jar produced in build - https://github.com/jfrog/build-info/pull/538

- Make download headers comparisons case insensitive - https://github.com/jfrog/build-info/pull/539

3.12.4 (7 July 2021)

- Bug fix - Save JFrog instances for the first time throws NPE - https://github.com/jfrog/build-info/pull/527

3.12.3 (5 July 2021)

- Bug fix - ReportUsage throws an exception for Artifactory version 6.9.0 - https://github.com/jfrog/build-info/pull/525

- Bug fix - BuildInfo link is broken if contains special characters - https://github.com/jfrog/jenkins-artifactory-plugin/pull/525

3.12.1 (30 Jun 2021)

- Add new JFrog Distribution commands - https://github.com/jfrog/jenkins-artifactory-plugin/pull/518

- Support collecting build-info for Docker images created by Kaniko and JIB - https://github.com/jfrog/jenkins-artifactory-plugin/pull/503

- Support Maven wrapper - https://github.com/jfrog/jenkins-artifactory-plugin/pull/482

- Add project option to scan build - https://github.com/jfrog/jenkins-artifactory-plugin/pull/517

- Support multibranch Artifactory trigger - https://github.com/jfrog/jenkins-artifactory-plugin/pull/507

- Bug fix - Legacy patterns don't display defined instances - https://github.com/jfrog/jenkins-artifactory-plugin/pull/510

- Bug fix - Link to BuildInfo page gets encoded twice - https://github.com/jfrog/jenkins-artifactory-plugin/pull/516

- Bug fix - In some cases, NoSuchMethodError raised using IOUtils.toString - https://github.com/jfrog/build-info/pull/516

3.11.4 (31 May 2021)

- Bug fix - Error when trying to download an empty (zero bytes size) file - https://github.com/jfrog/build-info/pull/507

- Bug fix - Deploy file doesn't print full URL in the log output - https://github.com/jfrog/build-info/pull/509

3.11.3 (29 May 2021)

- Bug fix - Artifactory servers are missing in Jenkins dropdown list - https://github.com/jfrog/jenkins-artifactory-plugin/pull/489

- Bug fix - Ignore missing fields while deserialize HTTP response - https://github.com/jfrog/build-info/pull/502

- Bug fix - Build retention service sends an empty body - https://github.com/jfrog/build-info/pull/504

- Bug fix - Artifactory Trigger cannot deserialize instance ItemLastModified - https://github.com/jfrog/build-info/pull/503

3.11.2 (26 May 2021)

- Bug fix - Set bypassProxy in the configuration page fails https://github.com/jfrog/jenkins-artifactory-plugin/pull/486

3.11.1 (25 May 2021)

- Gradle - Improve Gradle transitive dependency collection - https://github.com/jfrog/build-info/pull/498

- Bug fix - Compatibility with JCasC fails - https://github.com/jfrog/jenkins-artifactory-plugin/pull/478

3.11.0 (19 May 2021)

- Update commons-codec and commons-io - https://github.com/jfrog/jenkins-artifactory-plugin/pull/462

- Add JFrog platform URL to Jenkins configuration page - https://github.com/jfrog/jenkins-artifactory-plugin/pull/455

- Add support for Artifactory Projects - https://github.com/jfrog/jenkins-artifactory-plugin/pull/449

- Add support for npm 7.7 - https://github.com/jfrog/build-info/pull/484

- Add support for NuGet V3 protocol - https://github.com/jfrog/build-info/pull/479 & https://github.com/jfrog/build-info/pull/494

- Bug fix - Gradle init script with space in path causes failure - https://github.com/jfrog/jenkins-artifactory-plugin/pull/469

- Bug fix - env isn't collected in npm, Go, Pip, and NuGet builds - https://github.com/jfrog/jenkins-artifactory-plugin/pull/468

3.10.6 (23 Mar 2021)

- Bug fix - root workspace detection from flow - https://github.com/jfrog/jenkins-artifactory-plugin/pull/432

- Bug fix - tests for upload files with props omit multiple slashes - https://github.com/jfrog/jenkins-artifactory-plugin/pull/436

- Bug fix - Legacy patterns in existing Freestyle jobs are messed-up - https://github.com/jfrog/jenkins-artifactory-plugin/issues/434

- Bug fix - upload files with props omit multiple slashes - https://github.com/jfrog/build-info/issues/460

- Bug fix - Git-collect-issues should ignore errors when the revision of the previous build info doesn't exist - https://github.com/jfrog/build-info/issues/457

- Add usage report for pipeline APIs - https://github.com/jfrog/jenkins-artifactory-plugin/pull/441

- Populate requestedBy field in NPM build-info - https://github.com/jfrog/build-info/pull/446

- Populate requestedBy field in Gradle build-info - https://github.com/jfrog/build-info/pull/454

3.10.5 (25 Feb 2021)

3.10.4 (25 Jan 2021)

- Legacy patterns option removed from newly created Freestyle jobs

- Support a new ALL_PUBLICATIONS option in the Gradle pipeline deployer API

- Bug fix - java.lang.UnsatisfiedLinkError

- Bug fix - Conan - incorrect quotation marks

- Bug fix - Allow rtBuildInfo to configure existing server configuration

- Bug fix - Freestyle jobs UI crashes following recent changes in Jenkins

3.10.3 (3 Jan 2021)

- Bug fix ( HAP-1439)

3.10.2 (3 Jan 2021)

- Bug fix ( HAP-1438)

3.10.1 (29 December 2020)

- Bug fixes and improvements ( HAP-1389, HAP-1417, HAP-1419, HAP-1420, HAP-1421, HAP-1422, HAP-1423, HAP-1424, HAP-1425, HAP-1429, HAP-1430, HAP-1431, HAP-1432, HAP-1433, HAP-1434, HAP-1435)

3.10.0 (1 December 2020)

- New "npm ci" pipelines API ( HAP-1338)

- Support for build-info aggregation across different agents ( HAP-1412)

- New build scan summary table added to the build log ( HAP-1413)

- Bug fixes ( HAP-1415, HAP-1416)

3.9.1 (25 November 2020)

- File-Specs: Support wildcard in repositories, Add new 'exclusions' field which allows excluding repositories. ( HAP-1409)

- Bug fixes ( HAP-1401, HAP-1408, HAP-1410, HAP-1411)

3.9.0 (27 October 2020)

- Support Docker pull ( HAP-1397)

- Fix and improve interactive promotions UI ( HAP-1394)

- Get Artifactory trigger path which caused the build ( HAP-1246)

- Breaking change - BuildInfo.getArtifacts() Api returns the artifact's remote path without repository ( HAP-1426).

- Bug fixes ( HAP-1398, HAP-1384, HAP-1399, HAP-1385, HAP-1382, HAP-1396, HAP-1392, HAP-1391, HAP-1390, HAP-1379, HAP-1395)

3.8.1 (28 August 2020)

- Bug fix ( HAP-1378)

3.8.0 (17 August 2020)

- Breaking change - Push / pull docker image steps use as a separate Java process ( HAP-1375)

- Define Artifactory Trigger using pipeline script ( HAP-1373)

- Support for NuGet & .NET Core CLI in scripted and declarative pipelines ( HAP-1370)

- Support for Python in scripted and declarative pipelines ( HAP-1369)

- Allow defining Gradle Publications in scripted and declarative pipelines ( HAP-1367)

- Display the list of deployed artifacts on the job summary page for maven builds ( HAP-1346)

- Support for JCasC (Jenkins Configuration as Code) ( HAP-1092)

- Bug fixes ( HAP-1368, HAP-1372)

3.7.2 (7 July 2020)

3.7.0 (25 June 2020)

- Integration with JFrog Pipelines ( HAP-1348)

- Declarative pipeline support for Conan ( HAP-1360)

- Avoid assigning builds a FAILURE result ( HAP-1357)

3.6.2 (4 May 2020)

3.6.1 (19 Mar 2020)

3.6.0 (9 Mar 2020)

- Parallel maven and gradle deployments ( HAP-1308)

- Build-info module name customisation for generic, npm and go builds ( HAP-1259)

- Docker foreign layers support ( HAP-1314)

- Jenkins core API bump ( HAP-1312)

- Support the conan client's verify SSL option ( HAP-1309)

- Fixes and improvements ( HAP-1313, HAP-1311, HAP-1305, HAP-1304, HAP-1303, HAP-1299, HAP-1297, HAP-1295, HAP-1290, HAP-1286, HAP-1283, HAP-1286, HAP-1286, HAP-1395)

3.5.0 (30 Dec 2019)

- New Go pipeline APIs ( HAP-1272)

- Support access token auth with Artifactory ( HAP-1271)

- File Specs - support for sorting. ( HAP-1215)

- Gradle pipeline - support defining snapshot and release repositories. ( HAP-1174)

- Replace the usage of gradle's maven plugin with the maven-publish plugin. ( HAP-1096)

- NPM pipeline - allow running inside a container. ( HAP-1261)

- New Xray Scan Report icon. ( HAP-1274)

- Bug fixes ( HAP-1265, HAP-1264, HAP-1250, HAP-1240, HAP-1243, HAP-954, HAP-1268)

3.4.1 (23 Sep 2019)

3.4.0 (5 Sep 2019)

- Allow adding projects issues to the build-info in pipeline jobs ( HAP-1231)

- Attach vcs.url and vcs.revision to files uploaded from a pipeline job ( HAP-1233)

- Conan remote.add pipeline API - support "force" and "remoteName" ( HAP-1232)

- Bug fixes ( HAP-1230, HAP-1229, HAP-1225, HAP-1224, HAP-1219, HAP-1218, HAP-1214, HAP-1210, HAP-1209, HAP-1190)

3.3.2 (2 July 2019)

- Change Xray connection timeout to 300 seconds ( HAP-1213)

- Add psw to the exclude environment variables list of pipeline jobs only. ( HAP-1212)

- Bug fixes ( HAP-1204, HAP-1205)

3.3.0 (17 June 2019)

- Declarative pipeline API for Xray build scan ( HAP-1175)

- Declarative pipeline API for docker push ( HAP-1201)

- Bug fix ( HAP-1200)

3.2.4 (5 June 2019)

3.2.3 (4 June 2019)

3.2.2 (31 Mar 2019)

- Bug fixes & Improvements ( HAP-1150, HAP-1151, HAP-1157, HAP-1158, HAP-1160, HAP-1164, HAP-1168, HAP-1169)

3.2.1 (20 Feb 2019)

- Bug fix ( HAP-1156)

3.2.0 (18 Feb 2019)

- New Set/Delete Properties step in generic pipeline ( HAP-1153)

- Add an option to get a list of all downloaded artifacts ( HAP-1114)

3.1.2 (11 Feb 2019)

- Bug fix ( HAP-1146)

3.1.1 (10 Feb 2019)

- Add the ability to control the number of parallel uploads using File Specs ( HAP-1085)

- Bug fixes & Improvements ( HAP-1116, HAP-1136, HAP-1137, HAP-1140, HAP-1143, HAP-1144, HAP-1145)

3.1.0 (16 Jan 2019)

- Support for NodeJS plugin in npm builds ( HAP-1127)

- Support for Declarative syntax in npm builds ( HAP-1128)

- Bug fixes & Improvements ( HAP-1130, HAP-1132, HAP-1133, HAP-1134)

3.0.0 (31 Dec 2018)

- Upgrade to Java 8 - Maven, Gradle and Ivy builds no longer support JDK 7

- Support for Declarative syntax in Generic Maven and Gradle builds ( HAP-1093)

- Support for npm in scripted pipeline ( HAP-1044)

- Support for Artifactory trigger ( HAP-1012)

- New Fail No Op flag in generic pipeline ( HAP-1123)

- Breaking changes: Removal of deprecated features ( HAP-1119)

- Bug fixes & Improvements ( HAP-1120, HAP-1121, HAP-1122, HAP-1113, HAP-1112, HAP-1110, HAP-1102, HAP-1098, HAP-1090, HAP-1124)

2.16.2 (9 Jul 2018)

- Docker build-info performance improvement ( HAP-1075)

- Bug fixes ( HAP-1057, HAP-1076, HAP-1083, HAP-1086, HAP-1087)

2.16.1 (3 May 2018)

- Support "proxyless" configuration for docker build-info ( HAP-1061)

- Bug fixes ( HAP-1043, HAP-1068, HAP-1070)

2.16.0 (1 May 2018)

- File Specs - Deployment of Artifacts is now parallel ( HAP-1066)

- Conan build with Docker improvement ( HAP-1055)

- Bug fixes & improvements ( HAP-1000, HAP-1049, HAP-1058, HAP-1062, HAP-1065)

2.15.1 (1 Apr 2018)

2.15.0 (14 Mar 2018)

- Support Jenkins JEP-200 changes ( HAP-1032)

- File Specs - Files larger than 5 MB are now downloaded concurrently ( HAP-1041)

- Add support for Jenkins job-DSL ( HAP-1028)

- Validate git credentials early in Artifactory Release Staging ( HAP-1027)

- Bug fixes & improvements ( HAP-1042, HAP-1039, HAP-1031, HAP-1030, HAP-1029, HAP-1026, HAP-1025, HAP-1024, HAP-1021, HAP-1019, HAP-999, HAP-970)

2.14.0 (28 Dec 2017)

- Docker build info without the Build Info Proxy ( HAP-1016)

- Bug fixes ( HAP-1001, HAP-1003, HAP-1007, HAP-1008, HAP-1009, HAP-1010, HAP-1013)

2.13.1 (26 Oct 2017)

2.13.0 (27 Sep 2017)

- Support JFrog DSL from within Docker containers ( HAP-937)

- Release Staging API - Send release and queue metadata back as JSON in the response body ( HAP-971)

- Allow adding properties in a pipeline docker push ( HAP-974, HAP-977)

- Support pattern exclusion in File Specs ( HAP-985)

- File specs AQL optimizations ( HAP-990)

- Bug fixes ( HAP-961, HAP-962, HAP-964, HAP-969, HAP-972, HAP-978, HAP-980, HAP-981, HAP-983, HAP-988, HAP-991)

2.12.2 (27 Jul 2017)

2.12.1 (11 Jul 2017)

2.12.0 (29 Jun 2017)

- Pipeline - Allow separation of build and deployment for maven and gradle ( HAP-919)

- Git commit information as part of Build Info ( HAP-920)

- Support asynchronous build retention ( HAP-934)

- Support archive extraction using file specs ( HAP-942)

- Bug fixes ( HAP-933, HAP-929, HAP-912, HAP-941, HAP-940, HAP-935, HAP-938)

2.11.0 (17 May 2017)

- Support distribution as part of the Pipeline DSL ( HAP-908)

- Compatibility with JIRA Plugin 2.3 ( HAP-928)

- Bug fixes ( HAP-915, HAP-917, HAP-927)

2.10.4 (27 Apr 2017)

2.10.3 (5 Apr 2017)

- Support interactive promotion in Pipeline jobs ( HAP-891)

- Conan commands support for Pipeline jobs ( HAP-899)

- Improve build-info links for multi build Pipeline jobs ( HAP-901)

- Bug fixes ( HAP-556, HAP-855, HAP-887, HAP-897)

2.9.2 (5 Mar 2017)

2.9.1 (1 Feb 2017)

2.9.0 (7 Jan 2017)

- Add to file spec the ability to download artifact by build name and number ( HAP-865)

- Support Release Management as part of the Pipeline DSL ( HAP-797)

- Capture docker build-info from all agents proxies ( HAP-868)

- Support for Xray scan build feature ( HAP-866)

- Support customised build name ( HAP-869)

- Change file Specs pattern ( HAP-876)

- Bug fixes ( HAP-872, HAP-873, HAP-870, HAP-856, HAP-862)

2.8.2 (6 Dec 2016)

- Support promotion fail fast ( HAP-803)

- Enable setting the JDK for Maven/Gradle Pipeline API ( HAP-848)

- Support environment variables within Pipeline specs ( HAP-849)

- Improve release staging with git ( HAP-842)

- Bug fixes ( HAP-854, HAP-852, HAP-846, HAP-843, HAP-791, HAP-716, HAP-488)

2.8.1 (10 Nov 2016)

- Bug fix ( HAP-841)

2.8.0 (9 Nov 2016)

- Upload and download specs support to Jenkins generic jobs ( HAP-823)

- Pipeline docker enhancements ( HAP-834)

- Support matrix params as part of the Maven and Gradle Pipeline DSL ( HAP-835)

- Support credentials ID as part of the Docker Pipeline DSL ( HAP-838)

- Support reading download and upload specs from FS ( HAP-838)

- Bug fixes ( HAP-836, HAP-830, HAP-829, HAP-822, HAP-824, HAP-816, HAP-828, HAP-826)

2.7.2 (25 Sep 2016)

- Build-info support for docker images within pipeline jobs ( HAP-818)

- Support for Maven builds within pipeline jobs ( HAP-759)

- Support for Gradle builds within pipeline jobs ( HAP-760)

- Support for Credentials plugin ( HAP-810)

- Bug fixes ( HAP-723, HAP-802, HAP-804, HAP-815, HAP-816)

2.6.0 (7 Aug 2016)

- Pipeline support for build retention (discard old builds) (HAP-796)

- Pipeline support for build promotion, environment variables and system properties filtered collection ( HAP-787)

- Expose release version and next development release property as environment variable ( HAP-798)

- Bug fixes ( HAP-772, HAP-762, HAP-795, HAP-796, HAP-799)

2.5.1 (30 Jun 2016)

- Bug fix ( HAP-771)

2.5.0 (9 Jun 2016)

- Pipeline support for Artifactory ( HAP-625, HAP-722)

- Support for using credentials inside a Folder with the Credentials Plugin ( HAP-742)

- Configure a default release repository for the job from the configuration page ( HAP-688)

- Allow overriding Git credentials on the Artifactory release staging page ( HAP-626)

- Bug fixes ( HAP-754, HAP-752, HAP-747, HAP-736, HAP-726, HAP-722, HAP-715, HAP-712, HAP-704, HAP-695, HAP-688, HAP-671, HAP-642, HAP-639)

2.4.7 (12 Jan 2016)

- Bug fix ( HAP-678)

2.4.6 (13 Dec 2015)

- Bug fix ( HAP-674)

2.4.5 (6 Dec 2015)

2.4.4 (17 Nov 2015)

2.4.1 (6 Nov 2015)

- Bug fix ( HAP-660)

2.4.0 (2 Nov 2015)

- Use the Credentials Plugin ( HAP-491)

- FreeStyle Jobs - Support different Artifactory server for resolution and deployment ( HAP-616)

- Jenkins should write the Artifactory Plugin version to the build log and build-info json ( HAP-620)

- Bug fixes ( HAP-396, HAP-534, HAP-583, HAP-616, HAP-617, HAP-618, HAP-621, HAP-622, HAP-641, HAP-646)

2.3.1 (13 Jul 2015)

- Expose Release SCM Branch and Release SCM Tag as build variables ( HAP-606)

- Bug fixes ( HAP-397, HAP-550, HAP-576, HAP-593, HAP-603, HAP-604, HAP-605, HAP-609)

2.3.0 (01 Apr 2015)

- Push build to Bintray directly from Jenkins UI ( HAP-566)

- Multijob (plugin job) type not supported by Artifactory Plugin ( HAP-527)

- Support multi-configuration projects ( HAP-409)

- "Target-Repository" - need a dynamic parameter ( HAP-547)

- Bug fixes ( HAP-585, HAP-573, HAP-574, HAP-554, HAP-567)

2.2.7 (27 Jan 2015)

- Add resolve artifacts from Artifactory to the Free Style Maven 3 integration ( HAP-379)

- Bug fixes ( HAP-411, HAP-553, HAP-555)

2.2.5 (18 Dec 2014)

- Maven jobs - Record Implicit Project Dependencies and Build-Time Dependencies ( HAP-539)

- Possibility to refer to git url when using target remote for Release management ( HAP-525)

- Bug fixes ( HAP-537, HAP-542, HAP-535, HAP-528, HAP-516, HAP-507, HAP-484, HAP-454, HAP-538, HAP-523, HAP-548)

2.2.4 (21 Aug 2014)

- New Artifactory Release Management API ( HAP-503)

- Compatibility with Gradle 2.0 ( GAP-153)

- Job configuration page performance improvements ( HAP-492)

- Bug fixes ( HAP-485, HAP-499, HAP-502, HAP-508, HAP-509, HAP-301)

2.2.3 (10 Jun 2014)

- Artifactory plugin is back to support Maven 2 ( HAP-459)

- New feature, "Refresh Repositories" button, a convenient way to see the available repositories that exist in the configured Artifactory. This feature also improves the Job page load response, and fixes the following bug ( HAP-483)

- Supporting "Subversion plugin" version 2.+, on their compatibility with "Credentials plugin" ( HAP-486)

2.2.2 (21 May 2014)

- Split Resolution repository to Snapshot and Release ( HAP-442)

- Supporting Git plugin credentials ( HAP-479)

- Upgrade the Git plugin dependency; the minimum supported Git plugin version is now 2.0.1 (2.0.4 is recommended for the credentials feature)

- Fix bug with Maven release plugin ( HAP-373)

- Adding Version Control Url property to the Artifactory Build Info JSON ( HAP-478)

- Bug fixes ( HAP-432, HAP-470)

2.2.1 (11 Nov 2013)

- Fix for IllegalArgumentException in Deployment when no deployment is defined in Job ( HAP-241)

2.2.0 (16 Oct 2013)

- Fix parent pom resolution issue ( HAP-236) from Jenkins 1.521

- Add support for maven 3.1.X

- Option to omit from the build info artifacts that are not deployed because of include/exclude patterns ( HAP-444)

- Enable credentials configuration for repository listing per project ( HAP-430)

- Bug fixes

2.1.8 (26 Aug 2013)

- Fix migration to Jenkins 1.528 ( HAP-428)

2.1.7 (31 Jul 2013)

2.1.6 (24 Jun 2013)

- Fix plugin compatibility with Jenkins 1.519 ( HAP-418)

2.1.5 (23 Apr 2013)

- Black duck integration - Automatic Black duck Code-Center integration for open source license governance and vulnerability control ( HAP-394)

- Gradle 1.5 support for maven and ivy publishes - New 'artifactory-publish' plugin with full support for Ivy and Maven publications ( GAP-138)

- Bug fixes ( HAP-341, HAP-390, HAP-366, HAP-380)

2.1.4 (03 Feb 2013)

- Generic resolution interpolates environment variables ( HAP-352)

- Broken link issues ( HAP-362, HAP-371, HAP-360)

- Minor bug fixes

2.1.3 (14 Oct 2012)

- Support include/exclude patterns of captured environment variables ( BI-143)

- Bug fixes ( HAP-343, HAP-4, GAP-136)

2.1.2 (08 Aug 2012)

- Aggregating Jira issues from previous builds ( HAP-305)

- Bug fixes and improvements in generic deploy ( HAP-319, HAP-329)

2.1.1 (31 May 2012)

- NPE on Maven2 builds ( HAP-316)

2.1.0 (24 May 2012)

- Support for cloudbees 'Folder plugin' ( HAP-312, HAP-313)

- Minor bug fixes

2.0.9 (15 May 2012)

- Fix UI integration for Jenkins 1.463+ ( HAP-307)

- Minor bug fixes

2.0.8 (09 May 2012)

- Integration with Jira plugin ( HAP-297)

- Support build promotion for all build types ( HAP-264)

- Ability to leverage custom user plugins for staging and promotion ( HAP-271, HAP-272)

2.0.7 (20 Apr 2012)

- Generic artifact resolution (based on patterns or other builds output) to freestyle builds

- Optimized deploy - when a binary with the same checksum as an uploaded artifact already exists in the Artifactory storage, a new local reference will be created instead of reuploading the same content

- Bug fixes

2.0.6 (19 Mar 2012)

2.0.5 (08 Dec 2011)

- Compatible with Gradle 1.0-milestone-6

- Different Artifactory servers can be used for resolution and deployment ( HAP-203)

- Using the new Jenkins user cause class to retrieve triggering user. Requires Jenkins 1.428 or above ( HAP-254)

- Release management with Git works with the latest plugin. Requires Git plugin v1.1.13 or above ( HAP-259, JENKINS-12025)

- Build-info exports an environment variable 'BUILDINFO_PROPFILE' with the location of the generated build info properties file

2.0.4 (15 Aug 2011)

- Compatible with Jenkins 1.424+ ( HAP-223)

- Resolved Maven 3 deployments intermittently failing on remote nodes ( HAP-220)

- Target repository for staged builds is now respected for Maven 3 builds ( HAP-219)

- Remote builds no longer fail when "always check out a fresh copy" is used ( HAP-224)

2.0.3 (26 Jul 2011)

- Support for Git Plugin v1.1.10+ ( HAP-217)

- Native maven 3 jobs doesn't work if the Jenkins home path contains spaces ( HAP-218)

- Wrong tag URL is used when changing scm element during staged build ( HAP-215)

2.0.2 (07 Jul 2011)

- Support Jenkins version 1.417+ ( HAP-211)

2.0.1 (19 May 2011)

- Maven deployment from remote slaves - artifact deployment for Maven builds will run directly from a remote slave when artifact archiving is turned off, saving valuable bandwidth and time normally consumed by copying artifacts back to master for archiving and publishing (requires Maven 3.0.2 and above)

- Staging of Maven builds now correctly fails if snapshot dependencies are used in POM files ( HAP-183)

- All staging and promotion commit comments are now customizable ( HAP-181)

- Fix for staged builds failing on remote slaves ( HAP-189)

2.0.0 (4 May 2011)

- Release management with staging and promotion support

- Support for forcing artifact resolution in Maven 3 to go through Artifactory ( HAP-144)

- Isolated resolution for snapshot build chains for Maven and Gradle

- Ability to attach custom properties to published artifacts ( HAP-138)

- Improved Ant/Ivy integration

- Improved Gradle integration

- Support saving pinned builds (HAP-140)

- Option to delete deployed artifacts when synchronizing build retention ( HAP-161)

1.4.3 (7 Apr 2011)

- Compatible to work with Jenkins 1.405 ( HAP-159)

1.4.2 (27 Jan 2011)

1.4.1 (10 Jan 2011)

- Synchronize the build retention policy in Artifactory with Jenkins' build retention settings (requires Artifactory Pro) ( HAP-90)

1.4.0 (09 Jan 2011)

- Improved Gradle support

- Optimized checksum-based publishing with Artifactory 2.3.2+ that saves redeploying the same binaries

- Remote agent support for Gradle, Maven 3 and Ivy builds ( HAP-59, HAP-60, HAP-114)

- Configurable ivy/artifact patterns for Ivy builds ( HAP-120)

1.3.6 (21 Nov 2010)

- Allow specifying include/exclude patterns for published artifacts ( HAP-61).

- Support for custom Ivy/artifact patterns for Gradle published artifacts ( HAP-108).

1.3.5 (7 Nov 2010)

- Fixed integration with Jenkins maven release plugin. ( HAP-93)

- Global Artifactory credentials ( HAP-53)

- Auto preselect target release and snapshot repositories. ( HAP-98)

1.3.4 (28 Oct 2010)

- Fixed Gradle support

1.3.3 (21 Oct 2010)

- Update version of the Gradle extractor.

1.3.2 (19 Oct 2010)

- Support for running license checks on third-party dependencies and sending license violation email notifications ( HAP-91)

1.3.1 (19 Sep 2010)

- Maven 2 and Maven 3 support two target deploy repositories - releases and snapshots ( HAP-29)

- Maven 2 - Allow deployment even if the build is unstable ( HAP-77)

- Link to the build info next to each build that deployed build info ( HAP-80)

- Link to the builds list in the jobs' main page ( HAP-41)

- Allow skipping the creation and deployment of the build info ( HAP-47)

1.3.0 (26 Aug 2010)

- New support for Maven 3 Beta builds!

1.2.0 (26 Jul 2010)

- New support for Ivy builds! (many thanks to Timo Bingaman for adding the hooks to the Jenkins Ivy Plugin)

- Supporting incremental builds ( HAP-52)

- Testing connection to Artifactory in the main configuration page

- Update Jenkins dependency to version 1.358

- Fixed HAP-51 - tar.gz files were deployed as .gz files

1.1.0 (09 Jun 2010)

- Added support for gradle jobs, see: http://www.jfrog.org/confluence/x/tYK5

- Connection timeout setting changed from milliseconds to seconds.

- Allow bypassing the http proxy ( JENKINS-5892)

1.0.7 (04 Mar 2010)

- Improved Artifactory client

- Another fix for duplicate pom deployments

- Sending parent (upstream) build information

- Displaying only local repositories when working with Artifactory 2.2.0+

1.0.6 (16 Feb 2010)

- Fixed a bug in the plugin that in some cases skipped deployment of attached artifacts

- In some cases, pom were deployed twice

- MD5 hash is now set on all files

- Dependency type is passed to the build info

1.0.5 (22 Jan 2010)

- Using Jackson as JSON generator for BuildInfo (will fix issues with Hudson version 1.340-1.341)

1.0.4 (15 Jan 2010)

- Accept Artifactory urls with slash at the end

- Fixed JSON object creation to work with Hudson 1.340

1.0.3 (07 Jan 2010)

- Using preemptive basic authentication

1.0.2 (22 Dec 2009)

- Configurable connection timeout

1.0.1 (16 Dec 2009)

- Fixed Artifactory plugin relative location (for images and help files)

1.0.0 (14 Dec 2009)

- First stable release

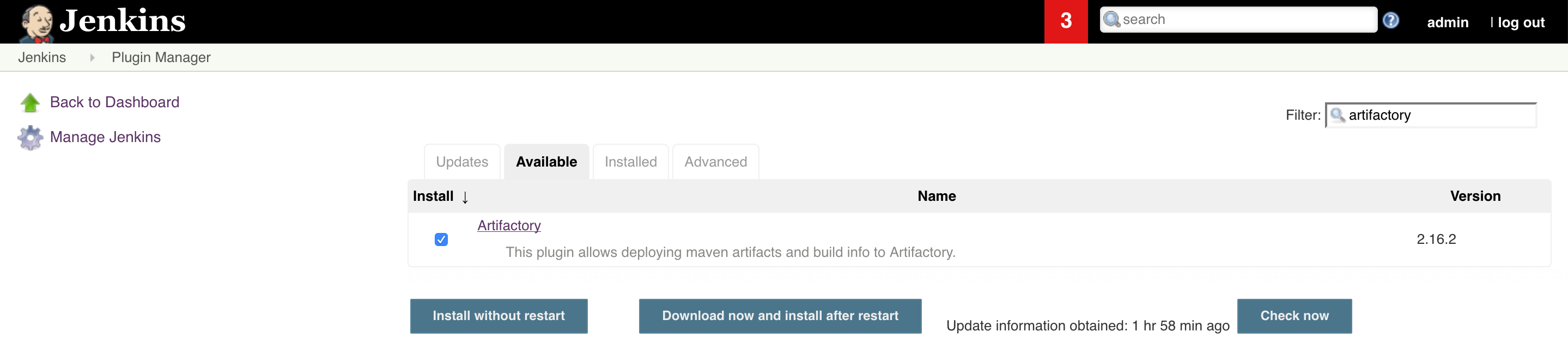

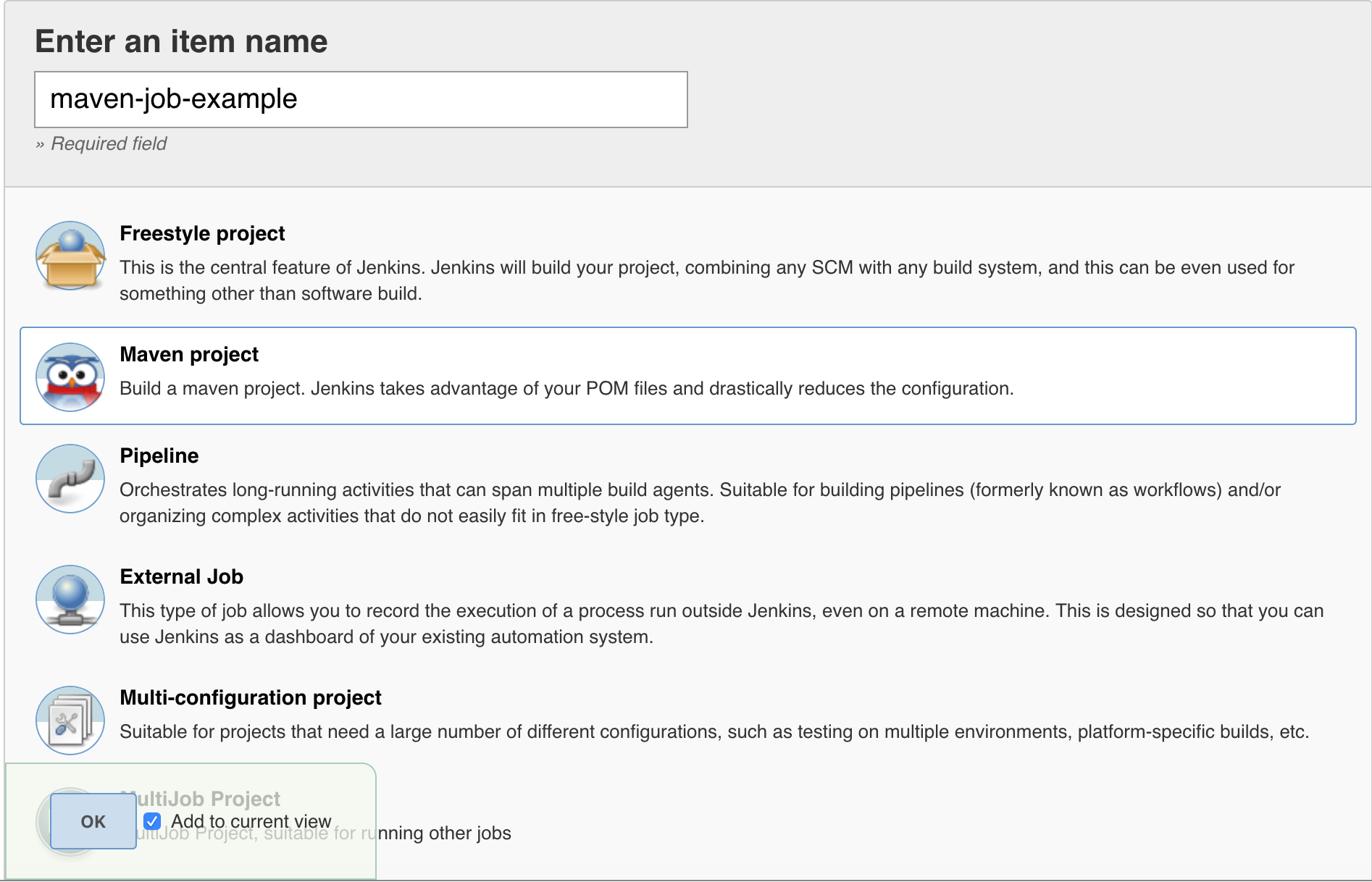

Configure Jenkins Artifactory Plug-in

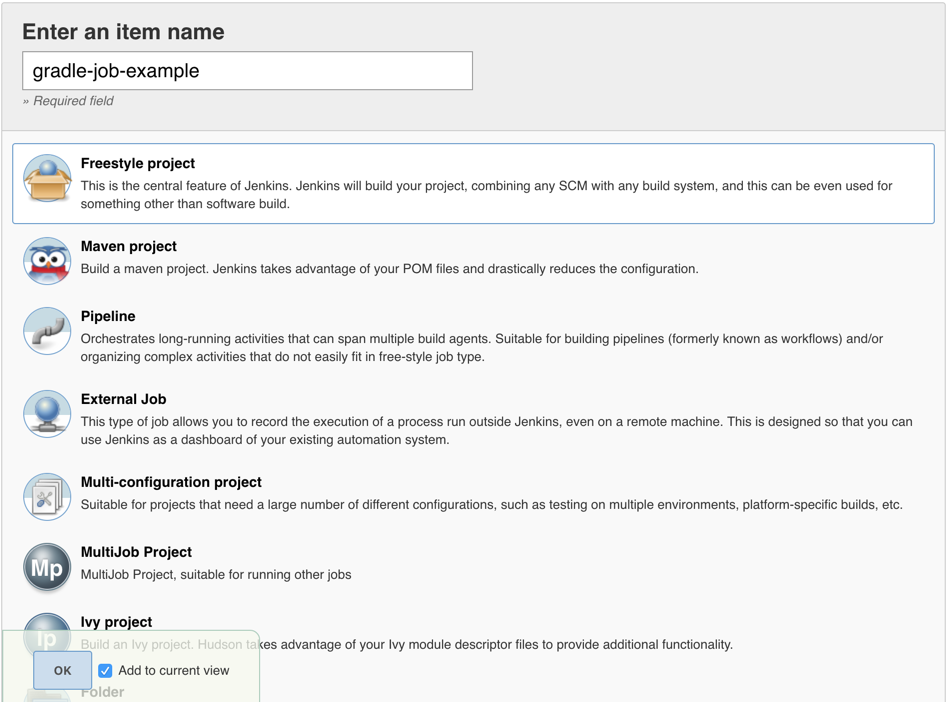

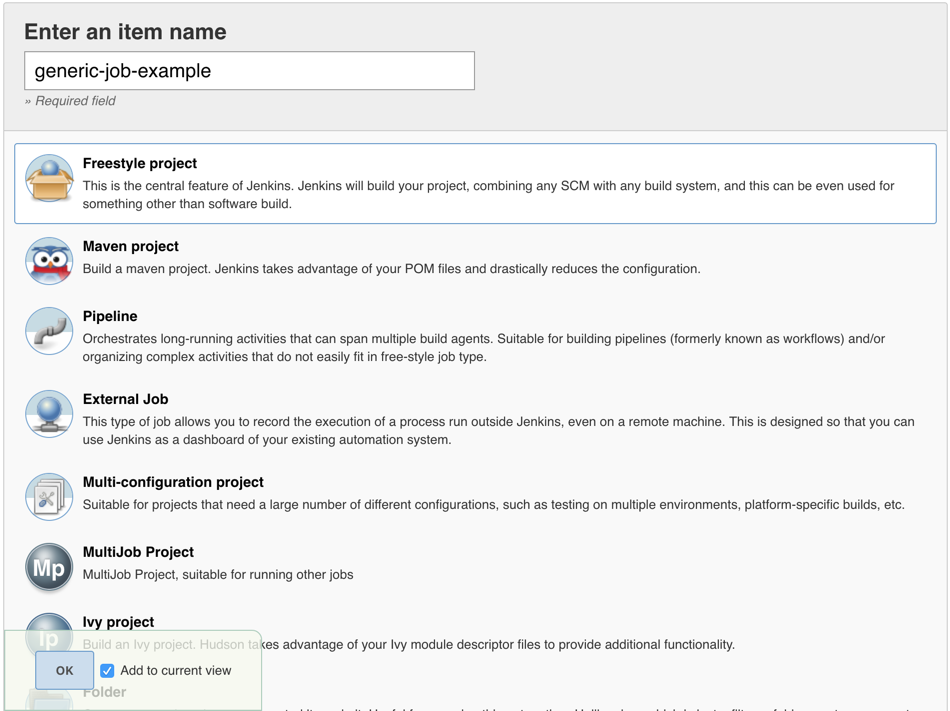

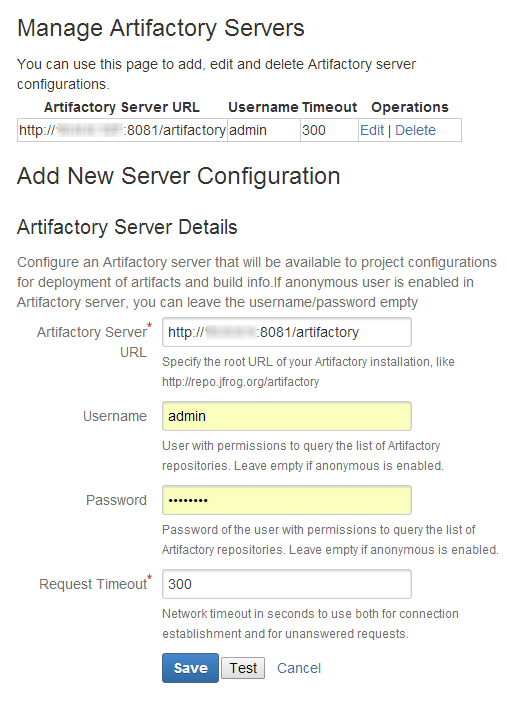

To install the Jenkins Artifactory Plugin, go to Manage Jenkins > Manage Plugins, click on the Available tab and search for Artifactory. Select the Artifactory plugin and click Download Now and Install After Restart.

Working With Pipeline Jobs in Jenkins

Pipeline jobs allow building a continuous delivery pipeline with Jenkins by creating a script that defines the steps of your build. For those not familiar with Jenkins Pipeline, please refer to the Pipeline Tutorial or the Getting Started With Pipeline documentation.

The Jenkins Artifactory Plugin adds pipeline APIs to support Artifactory operations as part of the build. You have the added option of downloading dependencies, uploading artifacts, and publishing build-info to Artifactory from a Pipeline script, in addition to integration with build tools and package managers.

Use Declarative or Scripted Syntax with Pipelines

Scripted and Declarative syntaxes are two different approaches to defining your pipeline jobs in Jenkins. When working with the Jenkins Artifactory plugin, choose one of them for each pipeline: mixing declarative and scripted steps within a single pipeline does not work.

More information on the difference between the two can be found in the Jenkins Pipeline Syntax documentation.

Integration Benefits JFrog Artifactory and Jenkins-CI

Declarative Pipeline Syntax

Pipeline jobs simplify building continuous delivery workflows with Jenkins by creating a script that defines the steps of your build. For those not familiar with Jenkins Pipeline, please refer to the Pipeline Tutorial or the Getting Started With Pipeline documentation.

The Jenkins Artifactory Plugin provides pipeline APIs for Artifactory operations. You have the added option of downloading dependencies, uploading artifacts, and publishing build-info to Artifactory from a pipeline script.

This page describes how to use declarative pipeline syntax with Artifactory. Declarative syntax is available from version 3.0.0 of the Jenkins Artifactory Plugin.

Tip

Scripted syntax is also supported. Read more about it here.

Examples

The Jenkins Pipeline Examples can help get you started creating your pipeline jobs with Artifactory.

Create an Artifactory Server Instance - Declarative Pipeline Syntax

There are two ways to tell the pipeline script which Artifactory server to use. You can either define the server details as part of the pipeline script, or define the server details in Manage | Configure System.

If you choose to define the Artifactory server in the pipeline, add the following to the script:

rtServer (

id: 'Artifactory-1',

url: 'http://my-artifactory-domain/artifactory',

// If you're using username and password:

username: 'user',

password: 'password',

// If you're using Credentials ID:

credentialsId: 'ccrreeddeennttiiaall',

// If Jenkins is configured to use an http proxy, you can bypass the proxy when using this Artifactory server:

bypassProxy: true,

// Configure the connection timeout (in seconds).

// The default value (if not configured) is 300 seconds:

timeout: 300

)

You can use a Jenkins Credentials ID instead of the username and password by setting the credentialsId property, as shown in the example above.

The id property (Artifactory-1 in the above example) is a unique identifier for this server, allowing us to reference this server later in the script. If you prefer to define the server in Manage | Configure System, you don't need to add the rtServer definition shown above. You can reference the server using its configured Server ID.

Upload and Download Files - Declarative Pipeline Syntax

To download the files, add the following closure to the pipeline script:

rtDownload (

serverId: 'Artifactory-1',

spec: '''{

"files": [

{

"pattern": "bazinga-repo/froggy-files/",

"target": "bazinga/"

}

]

}''',

// Optional - Associate the downloaded files with the following custom build name and build number,

// as build dependencies.

// If not set, the files will be associated with the default build name and build number (i.e. the

// Jenkins job name and number).

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

In the above example, files are downloaded from the Artifactory server referenced by the Artifactory-1 server ID.

The above closure also includes a File Spec, which specifies which files should be downloaded. In this example, the files under the bazinga-repo/froggy-files/ Artifactory path are downloaded into the bazinga directory on your Jenkins agent file system.

Uploading files is very similar. The following example uploads all ZIP files which include froggy in their names into the froggy-files folder in the bazinga-repo Artifactory repository.

rtUpload (

serverId: 'Artifactory-1',

spec: '''{

"files": [

{

"pattern": "bazinga/*froggy*.zip",

"target": "bazinga-repo/froggy-files/"

}

]

}''',

// Optional - Associate the uploaded files with the following custom build name and build number,

// as build artifacts.

// If not set, the files will be associated with the default build name and build number (i.e. the

// Jenkins job name and number).

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

You can manage the File Spec in separate files, instead of adding it as part of the rtUpload and rtDownload closures. This allows managing the File Specs in source control, possibly together with the project sources. Here's how you reference a File Spec file in the rtUpload closure. The configuration is similar in the rtDownload closure:

rtUpload (

serverId: 'Artifactory-1',

specPath: 'path/to/spec/relative/to/workspace/spec.json',

// Optional - Associate the uploaded files with the following custom build name and build number.

// If not set, the files will be associated with the default build name and build number (i.e. the

// Jenkins job name and number).

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

You can read about using File Specs for downloading and uploading files here.
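The file referenced by specPath is an ordinary JSON document. As a minimal sketch (written in Python, which is not part of the plugin), the upload spec shown earlier could be generated and saved like this. The helper name write_file_spec and the scratch directory are illustrative assumptions; only the "files", "pattern", and "target" keys come from the examples above.

```python
import json
import tempfile
from pathlib import Path


def write_file_spec(path, pattern, target):
    """Write a single-entry File Spec to `path` and return the spec dict.

    Hypothetical helper: only the "files", "pattern" and "target" keys
    are taken from the examples in this document.
    """
    spec = {"files": [{"pattern": pattern, "target": target}]}
    Path(path).write_text(json.dumps(spec, indent=2), encoding="utf-8")
    return spec


# Recreate the upload spec from the rtUpload example in a scratch directory.
workspace = Path(tempfile.mkdtemp())
spec_file = workspace / "spec.json"
written = write_file_spec(spec_file, "bazinga/*froggy*.zip", "bazinga-repo/froggy-files/")
print(spec_file.read_text(encoding="utf-8"))
```

Keeping the spec in a file like this makes it easy to review changes to upload and download patterns alongside the project sources.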

If you'd like to fail the build in case no files are uploaded or downloaded, add the failNoOp property to the rtUpload or rtDownload closures as follows:

rtUpload (

serverId: 'Artifactory-1',

specPath: 'path/to/spec/relative/to/workspace/spec.json',

failNoOp: true,

// Optional - Associate the uploaded files with the following custom build name and build number.

// If not set, the files will be associated with the default build name and build number (i.e. the

// Jenkins job name and number).

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

Set and Delete Properties on Files in Artifactory - Declarative Pipeline Syntax

When uploading files to Artifactory using the rtUpload closure, you have the option of setting properties on the files. These properties can be later used to filter and download those files.

In some cases, you may want to set properties on files that are already in Artifactory. The way to do this is very similar to the way you define which files to download or upload: a File Spec is used to select the files on which the properties should be set. The properties to be set are passed outside the File Spec. Here's an example.

rtSetProps (

serverId: 'Artifactory-1',

specPath: 'path/to/spec/relative/to/workspace/spec.json',

props: 'p1=v1;p2=v2',

failNoOp: true

)

In the above example:

- The serverId property is used to reference a pre-configured Artifactory server instance as described in the Create an Artifactory Server Instance section.

- The specPath property includes a path to a File Spec, which has a similar structure to the File Spec used for downloading files.

- The props property defines the properties we'd like to set. In the above example we're setting two properties - p1 and p2 with the v1 and v2 values respectively.

- The failNoOp property is optional. Setting it to true will cause the job to fail, if no properties have been set.
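The props value is a plain string of semicolon-separated key=value pairs. The following Python sketch (not part of the plugin; parse_props is a hypothetical helper) shows how such a string breaks down, which can be handy for checking a value before passing it to rtSetProps. How the plugin itself handles escaping or multi-value properties is not covered here.

```python
def parse_props(props):
    """Split a 'k1=v1;k2=v2' string into a dict (hypothetical helper)."""
    result = {}
    for pair in props.split(";"):
        if not pair:
            continue  # tolerate a trailing semicolon
        key, sep, value = pair.partition("=")
        if not sep or not key:
            raise ValueError(f"expected key=value, got {pair!r}")
        result[key] = value
    return result


parsed = parse_props("p1=v1;p2=v2")
print(parsed)
```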

You also have the option of specifying the File Spec directly inside the rtSetProps closure as follows.

rtSetProps (

serverId: 'Artifactory-1',

props: 'p1=v1;p2=v2',

spec: '''{

"files": [{

"pattern": "my-froggy-local-repo",

"props": "filter-by-this-prop=yes"

}]}'''

)

The rtDeleteProps closure is used to delete properties from files in Artifactory. The syntax is very similar to the rtSetProps closure. The only difference is that in rtDeleteProps, we specify only the names of the properties to delete. The names are comma-separated, and the property values should not be specified. Here's an example:

rtDeleteProps (

serverId: 'Artifactory-1',

specPath: 'path/to/spec/relative/to/workspace/spec.json',

props: 'p1,p2,p3',

failNoOp: true

)

As with the rtSetProps closure, the File Spec can be defined directly inside the closure as shown here:

rtDeleteProps (

serverId: 'Artifactory-1',

props: 'p1,p2,p3',

spec: '''{

"files": [{

"pattern": "my-froggy-local-repo",

"props": "filter-by-this-prop=yes"

}]}'''

)

Publish Build-Info to Artifactory - Declarative Pipeline Syntax

If you're not yet familiar with the build-info entity, read about it here.

Files that are downloaded by the rtDownload closure are automatically registered as the current build's dependencies, while files that are uploaded by the rtUpload closure are registered as the build artifacts. The dependencies and artifacts are recorded locally and can be later published as build-info to Artifactory.

Here's how you publish the build-info to Artifactory:

rtPublishBuildInfo (

serverId: 'Artifactory-1',

// The buildName and buildNumber below are optional. If you do not set them, the Jenkins job name is used

// as the build name. The same goes for the build number.

// If you choose to set custom build name and build number by adding the following buildName and

// buildNumber properties, you should make sure that previous build steps (for example rtDownload

// and rtUpload) have the same buildName and buildNumber set. If they don't, then these steps will not

// be included in the build-info.

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

If you set a custom build name and number as shown above, please make sure to set the same build name and number in the rtUpload or rtDownload closures as shown below. If you don't, Artifactory will not be able to associate these files with the build and therefore the files will not be displayed in Artifactory.

rtDownload (

serverId: 'Artifactory-1',

// Build name and build number for the build-info:

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key',

// You also have the option of customising the build-info module name:

module: 'my-custom-build-info-module-name',

specPath: 'path/to/spec/relative/to/workspace/spec.json'

)

rtUpload (

serverId: 'Artifactory-1',

// Build name and build number for the build-info:

buildName: 'holyFrog',

buildNumber: '42',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key',

// You also have the option of customising the build-info module name:

module: 'my-custom-build-info-module-name',

specPath: 'path/to/spec/relative/to/workspace/spec.json'

)

Capture Environment Variables - Declarative Pipeline Syntax

To set the Build-Info object to automatically capture environment variables while downloading and uploading files, add the following to your script.

Note

It is important to place the rtBuildInfo closure before any steps associated with this build (for example, rtDownload and rtUpload), so that its configured functionality (for example, environment variables collection) will be invoked as part of these steps.

rtBuildInfo (

captureEnv: true,

// Optional - Build name and build number. If not set, the Jenkins job's build name and build number are used.

buildName: 'my-build',

buildNumber: '20',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

By default, environment variable names which include "password", "psw", "secret", "token", or "key" (case insensitive) are excluded and will not be published to Artifactory.
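To make the default exclusion rule concrete, here is a rough Python sketch of the filtering described above. It is an illustration, not the plugin's implementation: the function name is_captured, the use of fnmatch for wildcard matching, and the rule that a variable must match an include pattern (when any are given) and no exclude pattern are all assumptions. The optional includes and excludes arguments mirror the includeEnvPatterns and excludeEnvPatterns properties.

```python
from fnmatch import fnmatchcase

# Substrings excluded by default, as listed above, expressed as wildcard patterns.
DEFAULT_EXCLUDES = ["*password*", "*psw*", "*secret*", "*token*", "*key*"]


def is_captured(name, includes=(), excludes=()):
    """Return True if the variable `name` would be kept (assumed semantics)."""
    lowered = name.lower()  # case-insensitive matching
    patterns_out = [p.lower() for p in list(DEFAULT_EXCLUDES) + list(excludes)]
    if any(fnmatchcase(lowered, p) for p in patterns_out):
        return False
    if includes:
        return any(fnmatchcase(lowered, p.lower()) for p in includes)
    return True


for var in ["JAVA_HOME", "DB_PASSWORD", "API_Token"]:
    print(var, is_captured(var))
```

Note that a broad default such as "key" also excludes unrelated names that merely contain that substring, so it is worth checking the published build-info if an expected variable is missing.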

You can add more include/exclude patterns with wildcards as follows:

rtBuildInfo (

captureEnv: true,

includeEnvPatterns: ['*abc*', '*bcd*'],

excludeEnvPatterns: ['*private*', 'internal-*'],

// Optional - Build name and build number. If not set, the Jenkins job's build name and build number are used.

buildName: 'my-build',

buildNumber: '20',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

Trigger Build Retention - Declarative Pipeline Syntax

Build retention can be triggered when publishing build-info to Artifactory using the rtPublishBuildInfo closure. Setting build retention therefore should be done before publishing the build, by using the rtBuildInfo closure, as shown below. Please make sure to place the following configuration in the script before the rtPublishBuildInfo closure.

rtBuildInfo (

// Optional - Maximum builds to keep in Artifactory.

maxBuilds: 1,

// Optional - Maximum days to keep the builds in Artifactory.

maxDays: 2,

// Optional - List of build numbers to keep in Artifactory.

doNotDiscardBuilds: ['3'],

// Optional (the default is false) - Also delete the build artifacts when deleting a build.

deleteBuildArtifacts: true,

// Optional - Build name and build number. If not set, the Jenkins job's build name and build number are used.

buildName: 'my-build',

buildNumber: '20',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

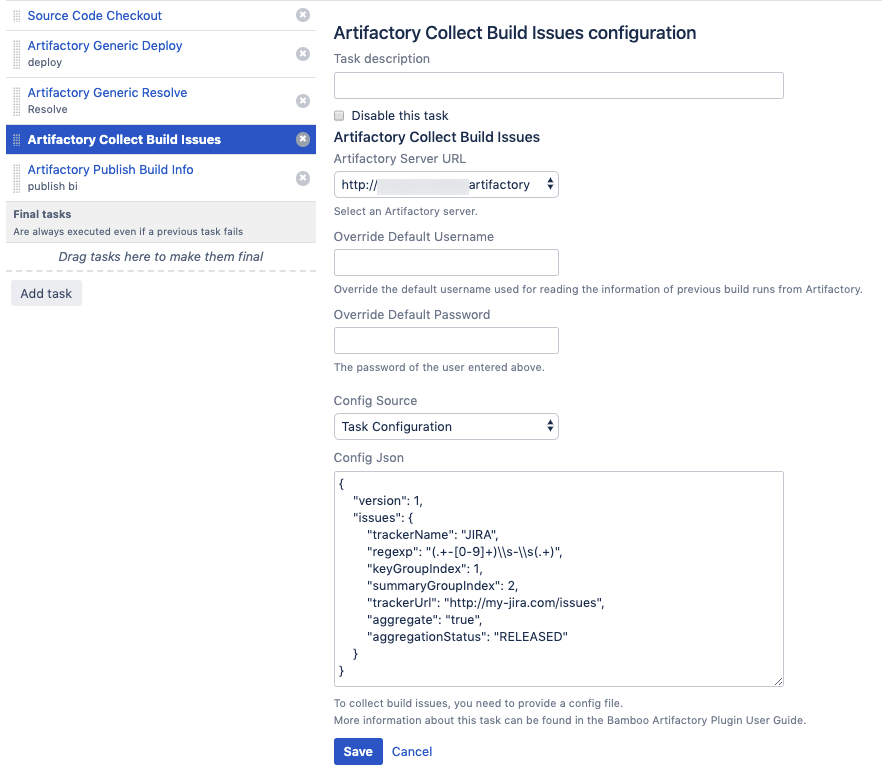

)Collect Build Issues - Declarative Pipeline Syntax

The build-info can include the issues which were handled as part of the build. The list of issues is automatically collected by Jenkins from the git commit messages. This requires the project developers to use a consistent commit message format, which includes the issue ID and issue summary, for example:

HAP-1364 - Replace tabs with spaces

The list of issues can be then viewed in the Builds UI in Artifactory, along with a link to the issue in the issues tracking system.

The information required for collecting the issues is provided through a JSON configuration. This configuration can be provided as a file or as a JSON string.

Here's an example of an issues collection configuration.

{

"version": 1,

"issues": {

"trackerName": "JIRA",

"regexp": "(.+-[0-9]+)\\s-\\s(.+)",

"keyGroupIndex": 1,

"summaryGroupIndex": 2,

"trackerUrl": "http://my-jira.com/issues",

"aggregate": "true",

"aggregationStatus": "RELEASED"

}

}

Configuration file properties:

| Property name | Description |
|---|---|
| version | The schema version is intended for internal use. Do not change! |
| trackerName | The name (type) of the issue tracking system. For example, JIRA. This property can take any value. |
| trackerUrl | The issue tracking URL. This value is used for constructing a direct link to the issues in the Artifactory build UI. |
| keyGroupIndex | The capturing group index in the regular expression used for retrieving the issue key. In the example above, setting the index to "1" retrieves HAP-1364 from this commit message: HAP-1364 - Replace tabs with spaces |
| summaryGroupIndex | The capturing group index in the regular expression used for retrieving the issue summary. In the example above, setting the index to "2" retrieves "Replace tabs with spaces" from this commit message: HAP-1364 - Replace tabs with spaces |
| aggregate | Set to true if you want all builds to include issues from previous builds. |
| aggregationStatus | If aggregate is set to true, this property indicates how far back in time the issues should be aggregated. In the example above, issues are aggregated from previous builds until a build with a RELEASED status is found. Build statuses are set when a build is promoted using the jfrog rt build-promote command. |
| regexp | A regular expression used for matching the git commit messages. The expression should include two capturing groups: one for the issue key (ID) and one for the issue summary. In the example above, the regular expression matches commit messages in the following format: HAP-1364 - Replace tabs with spaces |
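To make the regexp, keyGroupIndex and summaryGroupIndex properties more concrete, here is a minimal Groovy sketch that applies the example expression to the sample commit message. It only illustrates how the two capturing groups map to the issue key and summary; it is not the plugin's implementation. The variable names are made up for the illustration, and the sketch assumes the expression is matched against the whole commit message, which this document does not state.

```groovy
// Sample commit message in the format described above.
def message = 'HAP-1364 - Replace tabs with spaces'

// Same expression as the "regexp" property. A Groovy slashy string needs only a
// single backslash, whereas the JSON configuration doubles it ("\\s").
def pattern = ~/(.+-[0-9]+)\s-\s(.+)/

def matcher = pattern.matcher(message)

// Assumption: the expression must match the entire message.
assert matcher.matches()

def issueKey = matcher.group(1)      // corresponds to keyGroupIndex: 1
def issueSummary = matcher.group(2)  // corresponds to summaryGroupIndex: 2

assert issueKey == 'HAP-1364'
assert issueSummary == 'Replace tabs with spaces'

println "key=${issueKey}, summary=${issueSummary}"
```

Trying the expression against a few real commit messages in this way can help confirm that both group indexes point at the intended parts before the configuration is added to a job.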

Here's how you set issues collection in the pipeline script.

rtCollectIssues (

serverId: 'Artifactory-1',

config: '''{

"version": 1,

"issues": {

"trackerName": "JIRA",

"regexp": "(.+-[0-9]+)\\s-\\s(.+)",

"keyGroupIndex": 1,

"summaryGroupIndex": 2,

"trackerUrl": "http://my-jira.com/issues",

"aggregate": "true",

"aggregationStatus": "RELEASED"

}

}'''

)

In the above example, the issues configuration is embedded inside the rtCollectIssues closure. You can also provide the issues configuration in a file. Here's how you do this:

rtCollectIssues (

serverId: 'Artifactory-1',

configPath: '/path/to/config.json'

)

If you'd like to add the issues information to a specific build-info, you can also provide the build name and build number as follows:

rtCollectIssues (

serverId: 'Artifactory-1',

configPath: '/path/to/config',

buildName: 'my-build',

buildNumber: '20',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key'

)

Note

To help you get started, we recommend using the GitHub Examples.

Aggregate Builds - Declarative Pipeline Syntax

The build-info published to Artifactory can include multiple modules representing different build steps. As shown earlier in this section, you just need to pass the same buildName and buildNumber to all the steps that need them (rtUpload, for example).

But what if your build process runs on multiple machines, or is spread across different time periods? How do you aggregate all the build steps into one build-info?

In that case, you can create and publish a separate build-info for each segment of the build process, and then aggregate all those published builds into one build-info. The end result is one build-info which references other, previously published build-infos.

In the following example, our pipeline script publishes two build-info instances to Artifactory:

rtPublishBuildInfo (

serverId: 'Artifactory-1',

buildName: 'my-app-linux',

buildNumber: '1'

)

rtPublishBuildInfo (

serverId: 'Artifactory-1',

buildName: 'my-app-windows',

buildNumber: '1'

)

At this point, we have two build-infos stored in Artifactory. Now let's create our final build-info, which references the previous two:

rtBuildAppend(

// Mandatory:

serverId: 'Artifactory-1',

appendBuildName: 'my-app-linux',

appendBuildNumber: '1',

// The buildName and buildNumber below are optional. If you do not set them, the Jenkins job name is used

// as the build name. The same goes for the build number.

// If you choose to set custom build name and build number by adding the following buildName and

// buildNumber properties, you should make sure that previous build steps (for example rtDownload

// and rtUpload) have the same buildName and buildNumber set. If they don't, then these steps will not

// be included in the build-info.

buildName: 'final',

buildNumber: '1'

)

rtBuildAppend(

// Mandatory:

serverId: 'Artifactory-1',

appendBuildName: 'my-app-windows',

appendBuildNumber: '1',

buildName: 'final',

buildNumber: '1'

)

// Publish the aggregated build-info to Artifactory.

rtPublishBuildInfo (

serverId: 'Artifactory-1',

buildName: 'final',

buildNumber: '1'

)

If the published builds in Artifactory are associated with a project, you should add the project key to the rtBuildAppend and rtPublishBuildInfo steps as follows.

rtBuildAppend(

// Mandatory:

serverId: 'Artifactory-1',

appendBuildName: 'my-app-linux',

appendBuildNumber: '1',

buildName: 'final',

buildNumber: '1',

project: 'my-project-key'

)

rtBuildAppend(

// Mandatory:

serverId: 'Artifactory-1',

appendBuildName: 'my-app-windows',

appendBuildNumber: '1',

buildName: 'final',

buildNumber: '1',

project: 'my-project-key'

)

// Publish the aggregated build-info to Artifactory.

rtPublishBuildInfo (

serverId: 'Artifactory-1',

buildName: 'final',

buildNumber: '1',

project: 'my-project-key'

)

Note

Build promotion and build scanning with Xray do not currently support aggregated builds.

Promote Builds in Artifactory - Declarative Pipeline Syntax

To promote a build between repositories in Artifactory, define the promotion parameters in the rtPromote closure. For example:

rtPromote (

// Mandatory parameters

buildName: 'MK',

buildNumber: '48',

// Optional - Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key',

// Artifactory server ID from Jenkins configuration, or from configuration in the pipeline script

serverId: 'Artifactory-1',

// Name of target repository in Artifactory

targetRepo: 'libs-release-local',

// Optional parameters

// Comment and Status to be displayed in the Build History tab in Artifactory

comment: 'this is the promotion comment',

status: 'Released',

// Specifies the source repository for build artifacts.

sourceRepo: 'libs-snapshot-local',

// Indicates whether to promote the build dependencies, in addition to the artifacts. False by default.

includeDependencies: true,

// Indicates whether to fail the promotion process in case of failing to move or copy one of the files. False by default

failFast: true,

// Indicates whether to copy the files. Move is the default.

copy: true

)

Allow Interactive Promotion for Published Builds - Declarative Pipeline Syntax

The Promoting Builds in Artifactory section describes how your Pipeline script can promote builds in Artifactory. In some cases, however, you may want the build promotion to be performed after the build has finished. You can configure your Pipeline job to expose some or all of the builds it publishes to Artifactory, so that they can later be promoted interactively using a GUI.

When the build finishes, the promotion window is accessible by clicking the promotion icon next to the build run. To enable interactive promotion for a published build, add the rtAddInteractivePromotion closure as shown below.

rtAddInteractivePromotion (

// Mandatory parameters

// Artifactory server ID from Jenkins configuration, or from configuration in the pipeline script

serverId: 'Artifactory-1',

buildName: 'MK',

buildNumber: '48',

// Only if this build is associated with a project in Artifactory, set the project key as follows.

project: 'my-project-key',

// Optional parameters

// If set, the promotion window will display this label instead of the build name and number.

displayName: 'Promote me please',

// Name of target repository in Artifactory

targetRepo: 'libs-release-local',

// Comment and Status to be displayed in the Build History tab in Artifactory

comment: 'this is the promotion comment',

status: 'Released',

// Specifies the source repository for build artifacts.

sourceRepo: 'libs-snapshot-local',

// Indicates whether to promote the build dependencies, in addition to the artifacts. False by default.

includeDependencies: true,

// Indicates whether to fail the promotion process in case of failing to move or copy one of the files. False by default

failFast: true,

// Indicates whether to copy the files. Move is the default.

copy: true

)

You can add multiple _rtAddInteractivePromotion_ closures to include multiple builds in the promotion window.
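As a rough sketch of the multiple-closure case, the fragment below registers two builds for interactive promotion. It uses only parameters that appear in the example above. The build names, build numbers and display labels are illustrative placeholders, and the fragment assumes that both builds were already published to Artifactory earlier in the same Pipeline job.

```groovy
// Illustrative fragment: build names, numbers and labels are placeholders.
rtAddInteractivePromotion (
    serverId: 'Artifactory-1',
    buildName: 'my-app-linux',
    buildNumber: '1',
    // Label shown in the promotion window for this build.
    displayName: 'Promote Linux build',
    targetRepo: 'libs-release-local'
)

rtAddInteractivePromotion (
    serverId: 'Artifactory-1',
    buildName: 'my-app-windows',
    buildNumber: '1',
    displayName: 'Promote Windows build',
    targetRepo: 'libs-release-local'
)
```

Giving each closure its own displayName makes the entries easier to tell apart in the promotion window.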

Maven Builds with Artifactory - Declarative Pipeline Syntax

Maven builds can resolve dependencies, deploy artifacts and publish build-info to Artifactory.

Maven Compatibility

- The minimum Maven version supported is 3.3.9

- The deployment to Artifactory is triggered both by the deploy and install phases.

To run Maven builds with Artifactory from your Pipeline script, you first need to create an Artifactory server instance, as described in the _Creating an Artifactory Server Instance_ section.

The next step is to define an rtMavenResolver closure, which defines the dependencies resolution details, and an rtMavenDeployer closure, which defines the artifacts deployment details. Here's an example:

rtMavenResolver (

id: 'resolver-unique-id',

serverId: 'Artifactory-1',

releaseRepo: 'libs-release',

snapshotRepo: 'libs-snapshot'

)

rtMavenDeployer (

id: 'deployer-unique-id',

serverId: 'Artifactory-1',

releaseRepo: 'libs-release-local',

snapshotRepo: 'libs-snapshot-local',

// By default, 3 threads are used to upload the artifacts to Artifactory. You can override this default by setting:

threads: 6,

// Attach custom properties to the published artifacts:

properties: ['key1=value1', 'key2=value2']

)

As you can see in the example above, the resolver and deployer each need a unique ID, so that they can be referenced later in the script. In addition, they include an Artifactory server ID and the names of the release and snapshot Maven repositories.

Now we can run the Maven build, referencing the resolver and deployer we defined:

rtMavenRun (