Filestore Selection

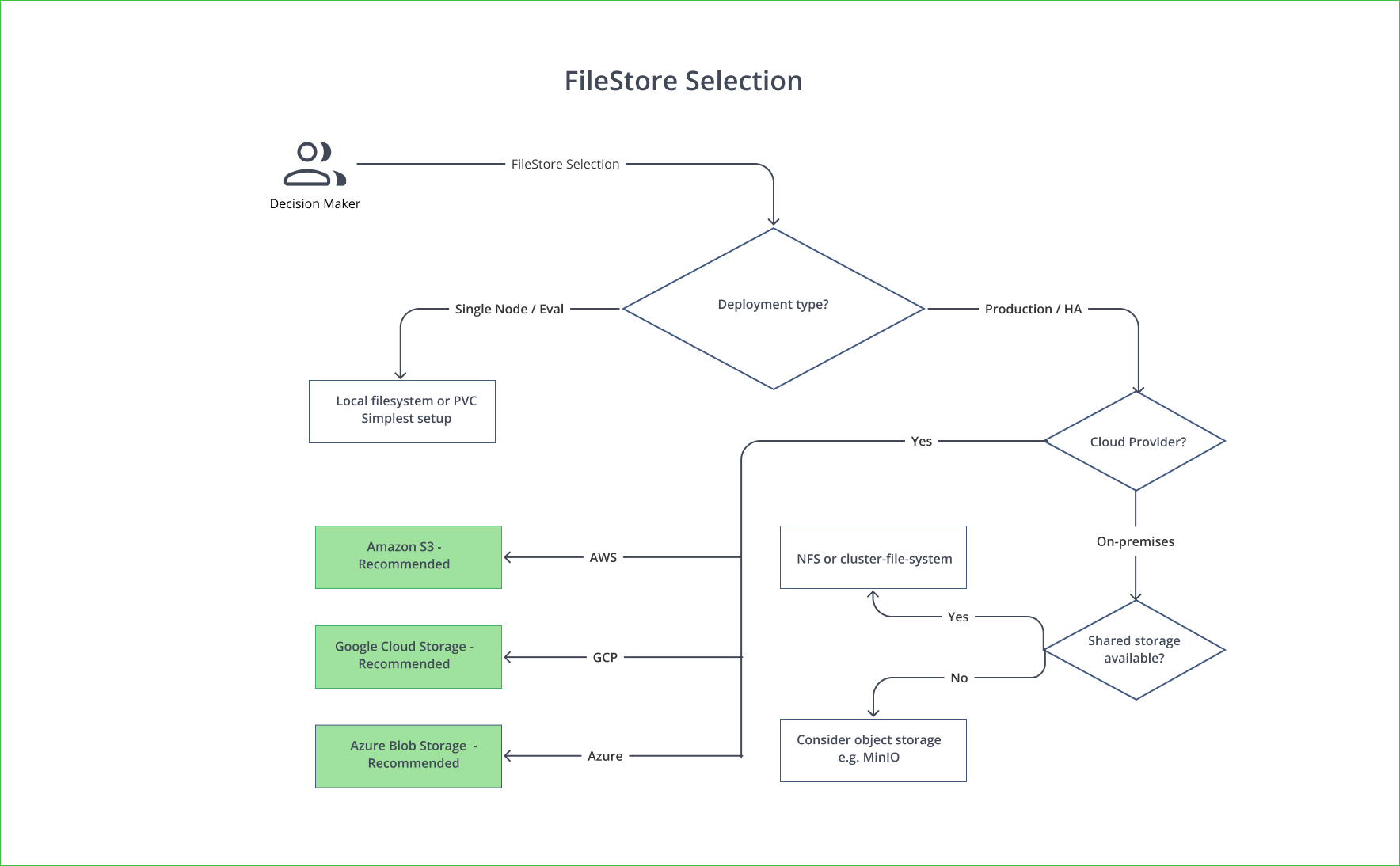

Choose the filestore backend for Artifactory artifact binaries: object storage (S3, GCS, or Azure Blob), NFS, or the local filesystem. The right choice depends on your deployment topology and affects performance, scalability, and high availability (HA).

When To Use This Page

Use this page to plan binary storage before a production deployment or an HA rollout.

Recommendation

Use Object Storage For Production

Use object storage (S3, GCS, or Azure Blob) for production deployments. Object storage scales without capacity planning, provides built-in durability, and is the recommended shared binary store for HA configurations, where multiple Artifactory nodes must read and write the same binaries.

Quick Decision

- Choose object storage for all production and HA deployments.

- Use NFS only when object storage is not available and your NFS has been verified to meet the NFS performance requirements on this page.

- Use local filesystem only for single-node evaluation and development.
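The Quick Decision rules can be expressed as a small function, for example as a planning check in an internal deployment tool. This is a sketch: the function, its parameter names, and the choice to raise an error when no backend qualifies are assumptions, not part of Artifactory.

```python
from enum import Enum


class Filestore(Enum):
    OBJECT_STORAGE = "object storage (S3/GCS/Azure Blob)"
    NFS = "NFS / shared filesystem"
    LOCAL = "local filesystem (PVC)"


def choose_filestore(
    *,
    production: bool,
    ha: bool,
    object_storage_available: bool,
    nfs_performance_proven: bool,
) -> Filestore:
    """Apply the Quick Decision rules to a planned deployment (hypothetical helper)."""
    if not production and not ha:
        # Single-node evaluation or development: local disk is enough.
        return Filestore.LOCAL
    if object_storage_available:
        # Default for every production and HA deployment.
        return Filestore.OBJECT_STORAGE
    if nfs_performance_proven:
        # Fallback only when object storage is unavailable.
        return Filestore.NFS
    raise ValueError(
        "no suitable filestore: provision object storage, "
        "or prove that NFS meets the performance requirements"
    )


print(choose_filestore(production=True, ha=True,
                       object_storage_available=False,
                       nfs_performance_proven=True).value)  # prints NFS / shared filesystem
```

Note that a production deployment with neither object storage nor proven NFS is rejected rather than falling back to local disk, because local filesystem is limited to evaluation and development.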

Comparison

| | Object Storage (S3/GCS/Azure Blob) | NFS / Shared Filesystem | Local Filesystem (PVC) |
|---|---|---|---|
| Best for | Production, HA, cloud | Self-managed HA without object storage | Evaluation, single node |
| HA support | Yes: all nodes share the same bucket | Yes: all nodes mount the same NFS share | No: the PVC is bound to one node |
| Scalability | Unlimited | Limited by NFS capacity | Limited by disk size |
| Durability | 99.999999999% (11 nines, S3) | Depends on NFS setup | Depends on disk/volume |
| Performance | High (supports direct upload/download) | Medium (depends on NFS latency) | High (local disk) |
| Cost | Pay per GB stored plus requests | NFS infrastructure cost | Disk cost |
| Direct cloud upload/download | Yes (reduces Artifactory CPU load) | No | No |
| Maintenance | Managed by cloud provider | Requires NFS administration | Minimal |
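The Durability row is easier to interpret as an expected number of lost objects per year. A minimal sketch, assuming durability is an annual per-object survival probability (the way AWS describes S3's 11 nines); the function name is hypothetical.

```python
def expected_annual_object_loss(object_count: int, nines: int) -> float:
    """Expected objects lost per year for a durability of `nines` nines."""
    # 11 nines = 99.999999999% survival, so each object is lost with p = 1e-11 per year.
    annual_loss_probability = 10.0 ** -nines
    return object_count * annual_loss_probability


loss = expected_annual_object_loss(10_000_000, 11)
print(f"{loss:.1e} objects per year, roughly one loss every {1 / loss:,.0f} years")
```

For 10 million stored binaries this works out to 10^7 × 10^-11 = 10^-4 expected losses per year, or roughly one object every 10,000 years. NFS and local disk have no comparable figure because their durability depends entirely on how they are built.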

When To Use Each Option

Object Storage (Production Recommended)

Use object storage when any of the following apply:

- You are deploying on AWS, GCP, or Azure (native integration)

- You need HA with multiple Artifactory nodes

- You want unlimited scalability without managing disk capacity

- You want to leverage direct cloud upload/download for better performance

| Cloud Provider | Service | Direct Upload |
|---|---|---|
| AWS | Amazon S3 | Yes (S3 direct upload) |
| GCP | Google Cloud Storage | Yes (GCS direct upload) |
| Azure | Azure Blob Storage | Yes (Azure direct upload) |

Keep Bucket And Cluster In One Region

The object storage bucket must be in the same region as your Artifactory deployment for acceptable latency and to avoid cross-region data transfer costs.
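A pre-deployment check can catch a region mismatch early. This sketch covers Amazon S3 only and assumes you already have the bucket's LocationConstraint (for example, from the S3 GetBucketLocation API) and the region your cluster runs in; both function names are hypothetical. The one S3-specific detail it encodes is that buckets in us-east-1 report an empty LocationConstraint.

```python
from typing import Optional


def normalize_s3_region(location_constraint: Optional[str]) -> str:
    """Map an S3 LocationConstraint value to a region name.

    S3 reports buckets in us-east-1 with an empty (null) LocationConstraint.
    """
    return location_constraint or "us-east-1"


def check_bucket_region(location_constraint: Optional[str], cluster_region: str) -> None:
    """Raise if the bucket and the Artifactory cluster are in different regions."""
    bucket_region = normalize_s3_region(location_constraint)
    if bucket_region != cluster_region:
        raise ValueError(
            f"bucket is in {bucket_region} but Artifactory runs in {cluster_region}; "
            "expect added latency and cross-region transfer charges"
        )


check_bucket_region("eu-west-1", "eu-west-1")  # same region: passes silently
check_bucket_region(None, "us-east-1")         # null constraint means us-east-1
```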

NFS / Shared Filesystem

Use NFS when all of the following are true:

- You are deploying in a self-managed environment without access to object storage

- You need HA but cannot use S3/GCS/Azure Blob

- Your NFS infrastructure provides sub-5ms latency and high throughput

Requirements:

- Type: SSD-backed

- Latency: Sub-millisecond to 5ms

- IOPS: 6000+

- Throughput: 1000 MB/sec+
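To make "proven performance" concrete, numbers measured with whatever benchmark you run can be compared against these requirements. A sketch: `NfsMeasurement` and `nfs_requirement_failures` are hypothetical names, and treating the thresholds as inclusive limits is an assumption.

```python
from dataclasses import dataclass
from typing import List

# Thresholds from the NFS requirements list.
MAX_LATENCY_MS = 5.0
MIN_IOPS = 6000
MIN_THROUGHPUT_MB_S = 1000.0


@dataclass
class NfsMeasurement:
    ssd_backed: bool
    latency_ms: float       # e.g. p99 latency of small synchronous writes
    iops: int
    throughput_mb_s: float


def nfs_requirement_failures(m: NfsMeasurement) -> List[str]:
    """Return every requirement the measured NFS share misses (empty list = pass)."""
    failures = []
    if not m.ssd_backed:
        failures.append("storage is not SSD-backed")
    if m.latency_ms > MAX_LATENCY_MS:
        failures.append(f"latency {m.latency_ms} ms is above {MAX_LATENCY_MS} ms")
    if m.iops < MIN_IOPS:
        failures.append(f"{m.iops} IOPS is below {MIN_IOPS}")
    if m.throughput_mb_s < MIN_THROUGHPUT_MB_S:
        failures.append(f"{m.throughput_mb_s} MB/sec is below {MIN_THROUGHPUT_MB_S} MB/sec")
    return failures


print(nfs_requirement_failures(NfsMeasurement(True, 2.5, 8000, 1200.0)))  # prints []
```

Returning every failure, rather than stopping at the first, makes it easier to see whether a share needs faster disks, lower latency, or both.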

NFS Performance Warning

NFS is not recommended for high-load environments because Artifactory is sensitive to filestore latency. If NFS performance is inconsistent, artifact uploads and downloads may fail.
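Because inconsistent latency is what causes failures, look at tail latency and not only the average. This sketch times small write-plus-fsync operations in a directory and reports p50, p99, and max in milliseconds. It is a rough smoke test that assumes small synchronous writes are representative of your load, not a replacement for a dedicated benchmark such as fio; the function name and mount path are hypothetical.

```python
import os
import statistics
import tempfile
import time


def probe_write_latency_ms(directory: str, samples: int = 200, size: int = 4096) -> dict:
    """Time `samples` small write+fsync calls in `directory`; return latency stats in ms."""
    if samples < 1:
        raise ValueError("samples must be at least 1")
    payload = os.urandom(size)
    latencies = []
    fd, path = tempfile.mkstemp(dir=directory, prefix="filestore-probe-")
    try:
        for _ in range(samples):
            os.lseek(fd, 0, os.SEEK_SET)  # overwrite the same block each time
            start = time.perf_counter()
            os.write(fd, payload)
            os.fsync(fd)  # flush to stable storage (the NFS server, on an NFS mount)
            latencies.append((time.perf_counter() - start) * 1000.0)
    finally:
        os.close(fd)
        os.remove(path)
    latencies.sort()
    p99_index = min(len(latencies) - 1, int(0.99 * len(latencies)))
    return {
        "p50": statistics.median(latencies),
        "p99": latencies[p99_index],
        "max": latencies[-1],
    }


# Hypothetical mount point; point it at the NFS share Artifactory will use:
# stats = probe_write_latency_ms("/mnt/artifactory-filestore")
print(probe_write_latency_ms(tempfile.gettempdir(), samples=20))
```

A p99 or max far above the p50 is the "inconsistent performance" pattern described above, even when the median looks healthy.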

Local Filesystem (Evaluation Only)

Use local filesystem when all of the following are true:

- You are running a single-node evaluation or development environment

- You do not need HA

- You are using the bundled database and default settings

Local Filesystem Is Not HA-Safe

Do not use local filesystem for HA. A local PVC is bound to a single Kubernetes node. Multiple Artifactory pods cannot share it.
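A deployment pipeline can enforce this rule before anything is rolled out. A minimal sketch; the filestore labels and the function name are hypothetical and are not Artifactory or Helm chart settings.

```python
VALID_FILESTORES = {"object-storage", "nfs", "local"}


def validate_filestore_topology(filestore: str, replicas: int) -> None:
    """Reject filestore/replica combinations that cannot work."""
    if filestore not in VALID_FILESTORES:
        raise ValueError(f"unknown filestore {filestore!r}")
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    if filestore == "local" and replicas > 1:
        # A local PVC is bound to a single node and cannot be shared by multiple pods.
        raise ValueError("local filesystem supports a single Artifactory node only; "
                         "use object storage or NFS for HA")


validate_filestore_topology("object-storage", replicas=3)  # OK
validate_filestore_topology("local", replicas=1)           # OK: single-node evaluation
```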

Filestore Sharding

For additional durability and availability, Artifactory supports filestore sharding — distributing binaries across multiple storage volumes.

See Configure a Sharding Binary Provider for setup instructions.
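The idea behind sharding with redundancy is that each binary, identified by its SHA-1 checksum, is written to several distinct volumes. The sketch below uses a simple checksum-based placement chosen purely for illustration; Artifactory's sharding provider uses its own configurable read and write strategies, so treat the function and its placement rule as hypothetical.

```python
import hashlib
from typing import List


def place_binary(sha1_hex: str, shard_count: int, redundancy: int) -> List[int]:
    """Choose `redundancy` distinct shard indexes for a binary (illustrative only)."""
    if not 1 <= redundancy <= shard_count:
        raise ValueError("redundancy must be between 1 and shard_count")
    # Derive a starting shard from the checksum, then walk forward so that
    # every copy of the same binary lands on a different volume.
    start = int(sha1_hex, 16) % shard_count
    return [(start + i) % shard_count for i in range(redundancy)]


checksum = hashlib.sha1(b"example artifact contents").hexdigest()
shards = place_binary(checksum, shard_count=4, redundancy=2)
print(checksum, "->", shards)
```

With a redundancy of 2, losing any single volume still leaves at least one copy of every binary, which is where the durability and availability benefit comes from.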

Local Cache (All Deployments)

Regardless of the filestore backend, Artifactory uses a local cache (cache-fs) on each node's disk for frequently accessed artifacts. This significantly improves download performance.

| Spec | Recommendation |
|---|---|
| Type | SSD |
| Size | 500 GB+ |
| IOPS | 6000+ |
| Throughput | 1000 MB/sec+ |

Larger cache sizes improve download performance for hot artifacts. See Sizing for per-template recommendations.
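To see why a larger cache helps hot artifacts, the sketch below models the local cache as a byte-bounded least-recently-used (LRU) cache: recently downloaded binaries stay on local disk, and the oldest are evicted once the size budget is exceeded. The LRU policy and the class name are assumptions for illustration, not Artifactory's implementation.

```python
from collections import OrderedDict


class ByteBoundedLruCache:
    """Illustrative model of a local binary cache with a size budget."""

    def __init__(self, max_bytes: int):
        self.max_bytes = max_bytes
        self.used_bytes = 0
        self._entries = OrderedDict()  # checksum -> size in bytes, oldest first

    def get(self, checksum: str) -> bool:
        """Return True on a hit and mark the binary as most recently used."""
        if checksum not in self._entries:
            return False  # miss: would be fetched from the filestore backend
        self._entries.move_to_end(checksum)
        return True

    def put(self, checksum: str, size: int) -> None:
        """Cache a binary, evicting least recently used entries to stay in budget."""
        if size > self.max_bytes:
            return  # larger than the whole cache: serve from the backend only
        if checksum in self._entries:
            self.used_bytes -= self._entries.pop(checksum)
        self._entries[checksum] = size
        self.used_bytes += size
        while self.used_bytes > self.max_bytes:
            _, evicted_size = self._entries.popitem(last=False)
            self.used_bytes -= evicted_size


cache = ByteBoundedLruCache(max_bytes=100)
cache.put("a", 60)
cache.put("b", 30)
cache.get("a")      # hit: "a" becomes most recently used
cache.put("c", 40)  # 130 > 100, so "b" (least recently used) is evicted
print(cache.get("a"), cache.get("b"), cache.get("c"))  # prints True False True
```

With a larger budget, "b" would have survived, and the next request for it would be a local hit instead of a fetch from the filestore backend.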

For detailed filestore configuration, see Filestore Configuration.

Next Steps

- Finalize database strategy in Database Selection

- Continue implementation planning in Storage Planning
